[An essay in my series on the Future of Robotics and Artificial Intelligence.]

We are surrounded by hysteria about the future of Artificial Intelligence and Robotics. There is hysteria about how powerful they will become, and how quickly, and there is hysteria about what they will do to jobs.

As I write these words on September 2nd, 2017, I note just two news stories from the last 48 hours.

Yesterday, in the New York Times, Oren Etzioni, chief executive of the Allen Institute for Artificial Intelligence, wrote an opinion piece titled “How to Regulate Artificial Intelligence”, in which he does a good job of arguing against the hysteria that Artificial Intelligence is an existential threat to humanity. He proposes rather sensible ways of thinking about regulations for Artificial Intelligence deployment, rather than the Chicken Little “the sky is falling” calls for regulation of research and knowledge that we have seen from people who really, really should know a little better.

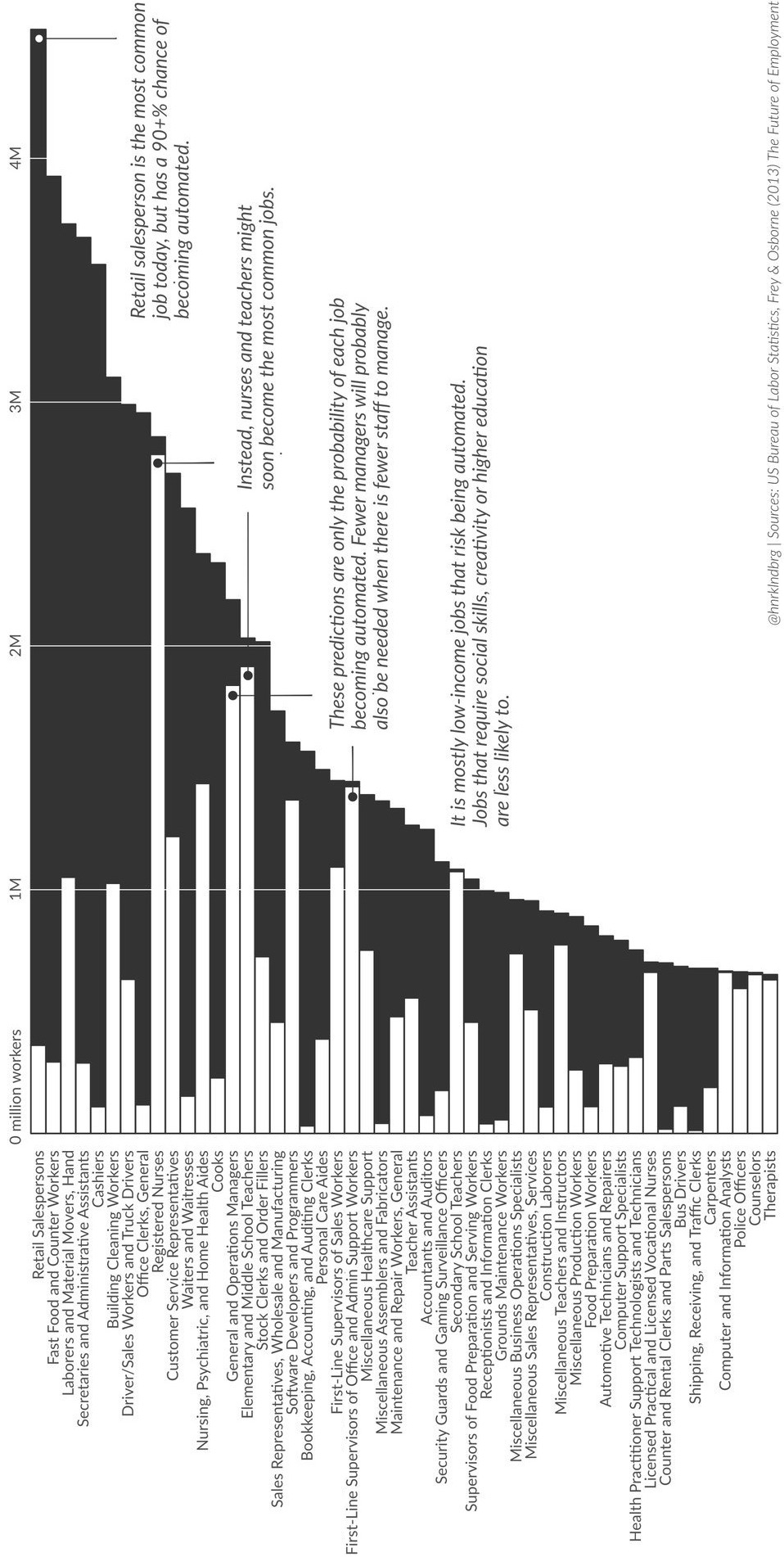

Today, there is a story in Market Watch that robots will take half of today’s jobs in 10 to 20 years. It even has a graphic to prove the numbers.

The claims are ludicrous. [I try to maintain professional language, but sometimes…] For instance, it appears to say that we will go from 1 million grounds and maintenance workers in the US to only 50,000 in 10 to 20 years, because robots will take over those jobs. How many robots are currently operational in those jobs? ZERO. How many realistic demonstrations have there been of robots working in this arena? ZERO. Similar stories apply to all the other job categories in this diagram where it is suggested that there will be massive disruptions of 90%, and even as much as 97%, in jobs that currently require physical presence at some particular job site.

Mistaken predictions lead to fear of things that are not going to happen. Why are people making mistakes in predictions about Artificial Intelligence and robotics, so that Oren Etzioni, I, and others, need to spend time pushing back on them?

Below I outline seven ways of thinking that lead to mistaken predictions about robotics and Artificial Intelligence. We find instances of these ways of thinking in many of the predictions about our AI future. But first I am going to list the four general topic areas of such predictions that I notice, along with a brief assessment of where I think they currently stand.

A. Artificial General Intelligence. Research on AGI is an attempt to distinguish a thinking entity from current day AI technology such as Machine Learning. Here the idea is that we will build autonomous agents that operate much like beings in the world. This has always been my own motivation for working in robotics and AI, but the recent successes of AI are not at all like this.

Some people think that all AI is an instance of AGI, but as the word “general” would imply, AGI aspires to be much more general than current AI. Interpreting current AI as an instance of AGI makes it seem much more advanced and all encompassing than it really is.

Modern day AGI research is not doing at all well on being either general or getting to an independent entity with an ongoing existence. It mostly seems stuck on the same issues in reasoning and common sense that AI has had problems with for at least fifty years. Alternate areas such as Artificial Life, and Simulation of Adaptive Behavior did make some progress in getting full creatures in the eighties and nineties (these two areas and communities were where I spent my time during those years), but they have stalled.

My own opinion is that of course this is possible in principle. I would never have started working on Artificial Intelligence if I did not believe that. However, perhaps we humans are just not smart enough to figure out how to do this (see my remarks on humility in my post on the current state of Artificial Intelligence suitable for deployment in robotics). Even if it is possible, I personally think we are far, far further away from understanding how to build AGI than many other pundits might say.

[Some people refer to “an AI”, as though all AI is about being an autonomous agent. I think that is confusing, and just as the natives of San Francisco do not refer to their city as “Frisco”, no serious researchers in AI refer to “an AI”.]

B. The Singularity. This refers to the idea that eventually an AI based intelligent entity, with goals and purposes, will be better at AI research than we humans are. Then, with an unending Moore’s law mixed in making computers faster and faster, Artificial Intelligence will take off by itself, and, as in speculative physics going through the singularity of a black hole, we have no idea what things will be like on the other side.

People who “believe” in the Singularity are happy to give post-Singularity AI incredible power, as what will happen afterwards is quite unpredictable. I put the word believe in scare quotes as belief in the singularity can often seem like a religious belief. For some it comes with an additional benefit of being able to upload their minds to an intelligent computer, and so get eternal life without the inconvenience of having to believe in a standard sort of supernatural God. The ever powerful technologically based AI is the new God for them. Techno religion!

Some people have very specific ideas about when the day of salvation will come–followers of one particular Singularity prophet believe that it will happen in the year 2029, as it has been written.

This particular error of prediction is very much driven by exponentialism, and I will address that as one of the seven common mistakes that people make.

Even if there is a lot of computer power around, that does not mean we are close to having programs that can do research in Artificial Intelligence, and rewrite their own code to get better and better.

Here is where we are on programs that can understand computer code. We currently have no programs that can understand a one page program as well as a new student in computer science can understand such a program after just one month of taking their very first class in programming. That is a long way from AI systems being better at writing AI systems than humans are.

Here is where we are on simulating brains at the neural level, the other methodology that Singularity worshipers often refer to. For about thirty years we have known the full “wiring diagram” of the 302 neurons in the worm C. elegans, along with the 7,000 connections between them. This has been incredibly useful for understanding how behavior and neurons are linked. But it has been a thirty-year study with hundreds of people involved, all trying to understand just 302 neurons. And according to the OpenWorm project, which is trying to simulate C. elegans bottom up, they are not yet halfway there. Simulating a human brain with 100 billion neurons and a vast number of connections is quite a way off. So if you are going to rely on the Singularity to upload yourself to a brain simulation, I would try to hold off on dying for another couple of centuries.
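To put the gap in rough numbers, here is a back-of-the-envelope ratio using only the figures in the paragraph above. It counts neurons only; the “vast number of connections” in the human brain is left out, so it is a lower bound on the jump in scale, not an estimate of simulation cost.

```latex
\frac{10^{11}\ \text{neurons (human)}}{302\ \text{neurons (C. elegans)}} \approx 3.3 \times 10^{8},
\qquad
\frac{7{,}000\ \text{connections}}{302\ \text{neurons}} \approx 23\ \text{connections per neuron (C. elegans)}
```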

Just in case I have not made my own position on the Singularity clear, I refer you to my comments in a regularly scheduled look at the event by the magazine IEEE Spectrum. Here is the 2008 version, and in particular a chart of where the players stand and what they say. Here is the 2017 version, and in particular a set of boxes of where the players stand and what they say. And yes, I do admit to being a little snarky in 2017…

C. Misaligned Values. The third case is that the Artificial Intelligence based machines get really good at execution of tasks, so much so that they are superhuman at getting things done in a complex world. And they do not share human values, and this leads to all sorts of problems.

I think there could be versions of this that are true. If I have recently bought an airline ticket to some city, suddenly all the web pages I browse that rely on advertisements for revenue start displaying ads for airline tickets to that same city. This is clearly dumb, but I don’t think it is a sign of super capable intelligence; rather, it is a case of poorly designed evaluation functions in the algorithms that place advertisements.

But here is a quote from one of the proponents of this view (I will let him remain anonymous, as an act of generosity):

The well-known example of paper clips is a case in point: if the machine’s only goal is maximizing the number of paper clips, it may invent incredible technologies as it sets about converting all available mass in the reachable universe into paper clips; but its decisions are still just plain dumb.

Well, no. We would never get to a situation in any version of the real world where such a program could exist. One smart enough that it would be able to invent ways to subvert human society to achieve goals set for it by humans, without understanding the ways in which it was causing problems for those same humans. Thinking that technology might evolve this way is just plain dumb (nice turn of phrase…), and relies on making multiple errors among the seven that I discuss below.

This same author repeatedly (including in the piece from which I took this quote, but also at the big International Joint Conference on Artificial Intelligence (IJCAI) that was held just a couple of weeks ago in Melbourne, Australia) argues that we need research to come up with ways to mathematically prove that Artificial Intelligence systems have their goals aligned with humans.

I think this case C comes from researchers seeing an intellectually interesting research problem, and then throwing their well-known voices behind promoting it as an urgent research question. Then AI hangers-on take it, run with it, and turn it into an existential problem for mankind.

By the way, I think mathematical provability is a vain hope. With multi-year large team efforts we cannot prove that a 1,000 line program cannot be breached by external hackers, so we certainly won’t be able to prove very much at all about large AI systems. The good news is that we humans were able to successfully co-exist with, and even use for our own purposes, horses, themselves autonomous agents with ongoing existences, desires, and superhuman physical strength, for thousands of years. And we had not a single theorem about horses. Still don’t!

D. Really evil horrible nasty human-destroying Artificially Intelligent entities. The last category is like case C, but here the supposed Artificial Intelligence powered machines will take an active dislike to humans and decide to destroy them and get them out of the way.

This has been a popular fantasy in Hollywood since at least the late 1960s with movies like 2001: A Space Odyssey (1968, but set in 2001), where the machine-wreaked havoc was confined to a single space ship, and Colossus: The Forbin Project (1970, and set in those times), where the havoc was at a planetary scale. The theme has continued over the years, more recently with I, Robot (2004, set in 2035), where the evil AI computer VIKI takes over the world through the instrument of the new NS-5 humanoid robots. [By the way, that movie continues the bizarre convention from other science fiction movies that large complex machines are built with drops of hundreds of feet around them, so that there can be great physical peril for the human heroes as they fight the good fight against the machines gone bad…]

This is even wronger than case C. I think it must make people feel tingly thinking about these terrible, terrible dangers…

In this blog, I am not going to address the issue of military killer robots–this often gets confused in the press with issue D above, and worse it often gets mashed together by people busy fear mongering about issue D. They are very separate issues. Furthermore I think that many of the arguments about such military robots are misguided. But it is a very different issue and will have to wait for another blog post.

Now, the seven mistakes I think people are making. All seven of them influence the assessments about timescales for and likelihood of each of scenarios A, B, C, and D coming about. But some, I believe, matter more than others in these mis-estimations. In the section header for each of these seven errors I have labeled where I think it does the most damage. The first one does some damage everywhere!

1. [A,B,C,D] Over and under estimating

Roy Amara was a futurist and the co-founder and President of the Institute For The Future in Palo Alto, home of Stanford University, countless venture capitalists, and the intellectual heart of Silicon Valley. He is best known for his adage, now referred to as Amara’s law:

We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.

There is actually a lot wrapped up in these 21 words which can easily fit into a tweet and allow room for attribution. An optimist can read it one way, and a pessimist can read it another. It should make the optimist somewhat pessimistic, and the pessimist somewhat optimistic, for a while at least, before each reverting to their norm.

A great example¹ of the two sides of Amara’s law, one that we have seen unfold over the last thirty years, concerns the US Global Positioning System. Starting in 1978, a constellation of 24 satellites (30 including spares) was placed in orbit. A ground station that can see 4 of them at once can compute its latitude, longitude, and height above a version of sea level. An operations center at Schriever Air Force Base in Colorado constantly monitors the precise orbits of the satellites and the accuracy of their onboard atomic clocks, and uploads minor and continuous adjustments to them. If those updates were to stop, GPS would fail to have you on the correct road as you drive around town after only a week or two, and would have you in the wrong town after a couple of months.
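Why four satellites? In the standard textbook model, each satellite’s signal yields a pseudorange: the true distance to the receiver plus an error caused by the receiver’s own clock being slightly off. That clock offset is a fourth unknown alongside the three position coordinates, so four satellites give four equations in four unknowns. The sketch below solves that system with Newton’s method. It is a simplified illustration under stated assumptions, not how a real receiver works: it uses Earth-centred Cartesian coordinates in metres, ignores atmospheric delays and Earth’s rotation, skips the conversion from x, y, z to latitude, longitude, and height, and the satellite positions in the example are made up.

```python
import math

def solve_linear(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x

def locate(sats, pseudoranges, iterations=10):
    """Estimate receiver position (x, y, z) and clock bias b, all in metres.

    Simplified model (an assumption): pseudorange_i = |sat_i - p| + b,
    with no atmosphere and no Earth rotation. Four satellites give four
    equations in the four unknowns x, y, z, b.
    """
    est = [0.0, 0.0, 0.0, 0.0]  # start at Earth's centre with zero clock bias
    for _ in range(iterations):
        jac, resid = [], []
        for (sx, sy, sz), rho in zip(sats, pseudoranges):
            dx, dy, dz = est[0] - sx, est[1] - sy, est[2] - sz
            dist = math.sqrt(dx * dx + dy * dy + dz * dz)
            resid.append(rho - (dist + est[3]))                 # measured minus predicted
            jac.append([dx / dist, dy / dist, dz / dist, 1.0])  # partial derivatives
        step = solve_linear(jac, resid)  # Newton step: J @ step = resid
        est = [e + s for e, s in zip(est, step)]
    return est

# Hypothetical satellites roughly 25,000 km from Earth's centre, all above the receiver's horizon.
sats = [
    (26_600_000.0, 0.0, 0.0),
    (15_000_000.0, 20_000_000.0, 0.0),
    (15_000_000.0, -10_000_000.0, 17_320_508.0),
    (15_000_000.0, -10_000_000.0, -17_320_508.0),
]
truth = (6_371_000.0, 0.0, 0.0)  # receiver on the surface
bias = 100.0                     # clock offset expressed as 100 m of range
rho = [math.dist(s, truth) + bias for s in sats]
print(locate(sats, rho))  # close to [6371000.0, 0.0, 0.0, 100.0]
```

Starting the iteration at Earth’s centre is a common choice in simple solvers; with exactly four satellites the equations can also admit a second, spurious solution far from Earth, which this starting point tends to steer away from.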

The goal of GPS was to allow precise placement of bombs by the US military. That was the expectation for it. The first operational use in that regard was in 1991 during Desert Storm, and it was promising. But during the nineties there was still much distrust of GPS as it was not delivering on its early promise, and it was not until the early 2000’s that its utility was generally accepted in the US military. It had a hard time delivering on its early expectations and the whole program was nearly cancelled again and again.

Today GPS is in the long term, and the ways it is used were unimagined when it was first placed in orbit. My Series 2 Apple Watch uses GPS while I am out running to record my location accurately enough to see which side of the street I ran along. The tiny size and tiny price of the receiver would have been incomprehensible to the early GPS engineers. GPS is now used for so many things that the designers never considered. It synchronizes physics experiments across the globe and is now an intimate component of synchronizing the US electrical grid and keeping it running, and it even allows the high-frequency traders who really control the stock market to mostly not fall into disastrous timing errors. It is used by all our airplanes, large and small, to navigate; it is used to track people out of jail on parole; and it determines which seed variant will be planted in which part of many fields across the globe. It tracks our fleets of trucks and reports on driver performance, and its signals bouncing off the ground are used to determine how much moisture there is in the soil, and so to set irrigation schedules.

GPS started out with one goal but it was a hard slog to get it working as well as was originally expected. Now it has seeped into so many aspects of our lives that we would not just be lost if it went away, but we would be cold, hungry, and quite possibly dead.

We see a similar pattern with other technologies over the last thirty years. A big promise up front, disappointment, and then slowly growing confidence, beyond where the original expectations were aimed. This is true of the blockchain (Bitcoin was the first application), sequencing individual human genomes, solar power, wind power, and even home delivery of groceries.

Perhaps the most blatant example is that of computation itself. When the first commercial computers were deployed in the 1950s there was widespread fear that they would take over all jobs (see the movie Desk Set from 1957). But for the next 30 years computers were something that had little direct impact on people’s lives, and even in 1987 there were hardly any microprocessors in consumer devices. That has all changed in the second wave over the subsequent 30 years, and now we all walk around with our bodies adorned with computers, our cars full of them, and they are all over our houses.

To see how the long-term influence of computers has consistently been underestimated, one need only go back and look at portrayals of them in old science fiction movies or TV shows about the future. The computer on the space ship set three hundred years in the future in the 1966 Star Trek (TOS) was laughable just thirty years later, let alone three centuries later. And in Star Trek: The Next Generation and Star Trek: Deep Space Nine, whose production spanned 1986 to 1999, large files still needed to be carried by hand around the far future space ship or space station, as they could not be sent over the network (like an AOL network of the time). And the databases available for people to search were completely anemic, with future interfaces that were pre-Web in design.

Most technologies are overestimated in the short term. They are the shiny new thing. Artificial Intelligence has the distinction of having been the shiny new thing and being overestimated again and again, in the 1960s, in the 1980s, and I believe again now. (Some of the marketing messages from large companies on their AI offerings are truly delusional, and may have very bad blowback for them in the not too distant future.)

Not all technologies get underestimated in the long term, but that is most likely the case for AI. The question is how long is the long term. The next six errors that I talk about help explain how the timing for the long term is being grossly underestimated for the future of AI.

2. [B,C,D] Imagining Magic

When I was a teenager, Arthur C. Clarke was one of the “big three” science fiction writers along with Robert Heinlein and Isaac Asimov. But Clarke was more than just a science fiction writer. He was also an inventor, a science writer, and a futurist.

In February 1945 he wrote a letter² to Wireless World about the idea of geostationary satellites for research, and in October of that year he published a paper³ outlining how they could be used to provide world-wide radio coverage. In 1948 he wrote a short story The Sentinel which provided the kernel idea for Stanley Kubrick’s epic AI movie 2001: A Space Odyssey, with Clarke authoring a book of the same name as the film was being made, explaining much that had left the movie audience somewhat lost.

In the period from 1962 to 1973 Clarke formulated three adages, which have come to be known as Clarke’s three laws (he said that Newton only had three, so three were enough for him too):

- When a distinguished but elderly scientist states that something is possible, he is almost certainly right. When he states that something is impossible, he is very probably wrong.

- The only way of discovering the limits of the possible is to venture a little way past them into the impossible.

- Any sufficiently advanced technology is indistinguishable from magic.

Personally I should probably be wary of the second sentence in his first law, as I am much more conservative than some others about how quickly AI will be ascendant. But for now I want to expound on Clarke’s third law.

Imagine we had a time machine (powerful magic in itself…) and we could transport Isaac Newton from the late 17th century to Trinity College Chapel in Cambridge University. That chapel was already 100 years old when he was there, so perhaps it would not be too much of an immediate shock to find himself in it, not realizing the current date.

Now show Newton an Apple. Pull out an iPhone from your pocket, and turn it on so that the screen is glowing and full of icons and hand it to him. The person who revealed how white light is made from components of different colored light by pulling apart sunlight with a prism and then putting it back together again would no doubt be surprised at such a small object producing such vivid colors in the darkness of the chapel. Now play a movie of an English country scene, perhaps with some animals with which he would be familiar–nothing indicating the future in the content. Then play some church music with which he would be familiar. And then show him a web page with the 500 plus pages of his personally annotated copy of his masterpiece Principia, teaching him how to use the pinch gestures to zoom in on details.

Could Newton begin to explain how this small device did all that? Although he invented calculus and explained both optics and gravity, Newton was never able to sort out chemistry and alchemy. So I think he would be flummoxed, and unable to come up with even the barest coherent outline of what this device was. It would be no different to him than an embodiment of the occult–something which was of great interest to him when he was alive. For him it would be indistinguishable from magic. And remember, Newton was a really smart dude.

If something is magic it is hard to know the limitations it has. Suppose we further show Newton how it can illuminate the dark, how it can take photos and movies and record sound, how it can be used as a magnifying glass, and as a mirror. Then we show him how it can be used to carry out arithmetical computations at incredible speed and to many decimal places. And we show it counting his steps as he carries it.

What else might Newton conjecture that the device in front of him could do? Would he conjecture that he could use it to talk to people anywhere in the world immediately from right there in the chapel? Prisms work forever. Would he conjecture that the iPhone would work forever just as it is, neglecting to understand that it needed to be recharged (and recall that we nabbed him from a time 100 years before the birth of Michael Faraday, so the concept of electricity was not quite around)? If it can be a source of light without fire could it perhaps also transmute lead to gold?

This is a problem we all have with imagined future technology. If it is far enough away from the technology we have and understand today, then we do not know its limitations. It becomes indistinguishable from magic.

When a technology passes that magic line anything one says about it is no longer falsifiable, because it is magic.

This is a problem I regularly encounter when trying to debate with people about whether we should fear just plain AGI, let alone cases C or D from above. I am told that I do not understand how powerful it will be. That is not an argument. We have no idea whether it can even exist. All the evidence that I see says that we have no real idea yet how to build one. Its properties are completely unknown, so rhetorically it quickly becomes magical and super powerful. Without limit.

Nothing in the Universe is without limit. Not even magical future AI.

Watch out for arguments about future technology which is magical. It can never be refuted. It is a faith-based argument, not a scientific argument.

3. [A,B,C] Performance versus competence

One of the social skills that we all develop is an ability to estimate the capabilities of individual people with whom we interact. It is true that sometimes “out of tribe” issues tend to overwhelm and confuse our estimates, and such is the root of the perfidy of racism, sexism, classism, etc. In general, however, we use cues from how a person performs some particular task to estimate how well they might perform some different task. We are able to generalize from observing performance at one task to a guess at competence over a much bigger set of tasks. We understand intuitively how to generalize from the performance level of the person to their competence in related areas.

When in a foreign city we ask a stranger on the street for directions, and they reply confidently, in the language we spoke to them in, with directions that seem to make sense, we think it worth pushing our luck and asking them what the local system is for paying when you want to take a bus somewhere in that city.

If our teenage child is able to configure their new game machine to talk to the household wifi we suspect that if sufficiently motivated they will be able to help us get our new tablet computer on to the same network.

If we notice that someone is able to drive a manual transmission car, we will be pretty confident that they will be able to drive one with an automatic transmission too. Though if the person is North American we might not expect it to work for the converse case.

If we ask an employee in a large hardware store where to find a particular item, a home electrical fitting say, that we are looking for and they send us to an aisle of garden tools, we will probably not go back and ask that very same person where to find a particular bathroom fixture. We will estimate that not only do they not know where the electrical fittings are, but that they really do not know the layout of goods within the store, and we will look for a different person to ask with our second question.

Now consider a case that is closer to some performances we see for some of today’s AI systems.

Suppose a person tells us that a particular photo is of people playing Frisbee in the park, then we naturally assume that they can answer questions like “what is the shape of a Frisbee?”, “roughly how far can a person throw a Frisbee?”, “can a person eat a Frisbee?”, “roughly how many people play Frisbee at once?”, “can a 3 month old person play Frisbee?”, “is today’s weather suitable for playing Frisbee?”; in contrast we would not expect a person from another culture who says they have no idea what is happening in the picture to be able to answer all those questions. Today’s image labelling systems that routinely give correct labels, like “people playing Frisbee in a park” to online photos, have no chance of answering those questions. Besides the fact that all they can do is label more images and can not answer questions at all, they have no idea what a person is, that parks are usually outside, that people have ages, that weather is anything more than how it makes a photo look, etc., etc.

This does not mean that these systems are useless, however. They are of great value to search engine companies. Simply labelling images well lets a search engine bridge the gap from searching for words to searching for images. Note too that search engines usually provide multiple answers to any query and let the person using the engine review the top few and decide which ones are actually relevant. Search engine companies strive to get the best possible answer somewhere in the top five or so results, but they rely on the cognitive abilities of the human user so that they do not have to get the best answer first, every time. If they only gave one answer, whether to a search for “great hotels in Paris”, or at an e-commerce site only gave one image selection for a “funky neck tie”, they would not be as useful as they are.
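One simple way to express the “somewhere in the top five” goal is a hit rate at k: the fraction of queries for which at least one relevant result appears among the first k returned. The sketch below is only illustrative; the function names and the toy queries are hypothetical, and real search engines use richer ranking measures than this.

```python
def hit_at_k(ranked, relevant, k=5):
    """True if any of the first k ranked results is in the relevant set."""
    return any(item in relevant for item in ranked[:k])

def hit_rate(queries, k=5):
    """Fraction of (ranked_results, relevant_set) pairs with a hit in the top k."""
    hits = sum(hit_at_k(ranked, relevant, k) for ranked, relevant in queries)
    return hits / len(queries)

# Hypothetical toy data: each entry is (ranked results, set of relevant results).
queries = [
    (["h1", "h2", "h3", "h4", "h5", "h6"], {"h3"}),  # relevant result at rank 3
    (["t1", "t2", "t3"], {"t9"}),                    # relevant result never returned
    (["p1", "p2"], {"p1"}),                          # relevant result ranked first
]
print(hit_rate(queries, k=1))  # only the third query counts
print(hit_rate(queries, k=5))  # the first and third queries count
```

Requiring the best answer first (k = 1) is much harsher than letting a person scan the top five, which is exactly the slack the human user provides.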

Here is what goes wrong. People hear that some robot or some AI system has performed some task. They then take the generalization from that performance to a general competence that a person performing that same task could be expected to have. And they apply that generalization to the robot or AI system.

Today’s robots and AI systems are incredibly narrow in what they can do. Human style generalizations just do not apply. People who do make these generalizations get things very, very wrong.

4. [A,B] Suitcase words

I spoke briefly about suitcase words (Marvin Minsky’s term⁴) in my post explaining how machine learning works. There I was discussing how the word learning can mean so many different types of learning when applied to humans. And as I said there, surely there are different mechanisms that humans use for different sorts of learning. Learning to use chopsticks is a very different experience from learning the tune of a new song. And learning to write code is a very different experience from learning your way around a particular city.

When people hear that Machine Learning is making great strides and they think about a machine learning in some new domain, they tend to use as a mental model the way in which a person would learn that new domain. However, Machine Learning is very brittle, and it requires lots of human preparation by researchers or engineers, special purpose coding for processing input data, special purpose sets of training data, and a custom learning structure for each new problem domain. Today’s Machine Learning by computers is not at all the sponge like learning that humans engage in, making rapid progress in a new domain without having to be surgically altered or purpose built.

Likewise, when people hear that computers can now beat the world chess champion (in 1997) or the world Go champion (in 2016), they tend to think that it is “playing” the game just like a human would. Of course in reality those programs had no idea what a game actually was (again, see my post on machine learning), nor that they were playing. And as pointed out in this article in The Atlantic, during the recent Go challenge the human player, Lee Sedol, was supported by 12 ounces of coffee, whereas the AI program, AlphaGo, was running on a whole bevy of machines as a distributed application, and was supported by a team of more than 100 scientists.

When a human plays a game a small change in rules does not throw them off–a good player can adapt. Not so for AlphaGo or Deep Blue, the program that beat Garry Kasparov back in 1997.

Suitcase words lead people astray in understanding how well machines are doing at tasks that people can do. AI researchers, on the other hand, and worse, their institutional press offices, are eager to claim that their research results are an instance of what a suitcase word means for humans. The important phrase here is “an instance”. No matter how careful the researchers are, and unfortunately not all of them are so very careful, as soon as word of the research result gets to the press office and then out into the unwashed press, that detail soon gets lost. Headlines trumpet the suitcase word, and mis-set the general understanding of where AI is, and how close it is to accomplishing more.

And we haven’t even gotten to applying many of Minsky’s suitcase words to AI systems: consciousness, experience, or thinking. For us humans it is hard to think about playing chess without being conscious, or having the experience of playing, or thinking about a move. So far, none of our AI systems have risen to an even elementary level where one of the many ways in which we use those words about humans apply. When we do get to a point where we start using some of those words about particular AI systems (and I tend to think that we will), the press, and most people, will over generalize again.

Even with a very narrow single aspect demonstration of one slice of these words I am afraid people will over generalize and think that machines are on the very doorstep of human-like capabilities in these aspects of being intelligent.

Words matter, but whenever we use a word to describe something about an AI system, where that word can also be applied to humans, we find people overestimating what it means. So far most words that apply to humans, when used for machines, are only a microscopically narrow conceit of what the word means when applied to humans.

Here are some of the verbs that have been applied to machines, and for which machines are totally unlike humans in their capabilities:

anticipate, beat, classify, describe, estimate, explain, hallucinate, hear, imagine, intend, learn, model, plan, play, recognize, read, reason, reflect, see, understand, walk, write

For all these words there have been research papers describing a narrow sliver of the rich meanings that these words imply when applied to humans. Unfortunately the use of these words suggests that there is much more there there than is there.

This leads people to misinterpret and then overestimate the capabilities of today’s Artificial Intelligence.

5. [A,B,…] Exponentials

Many people are suffering from a severe case of “exponentialism”.

Everyone has some idea about Moore’s Law, at least enough to sort of know that computers get better and better on a clockwork-like schedule.

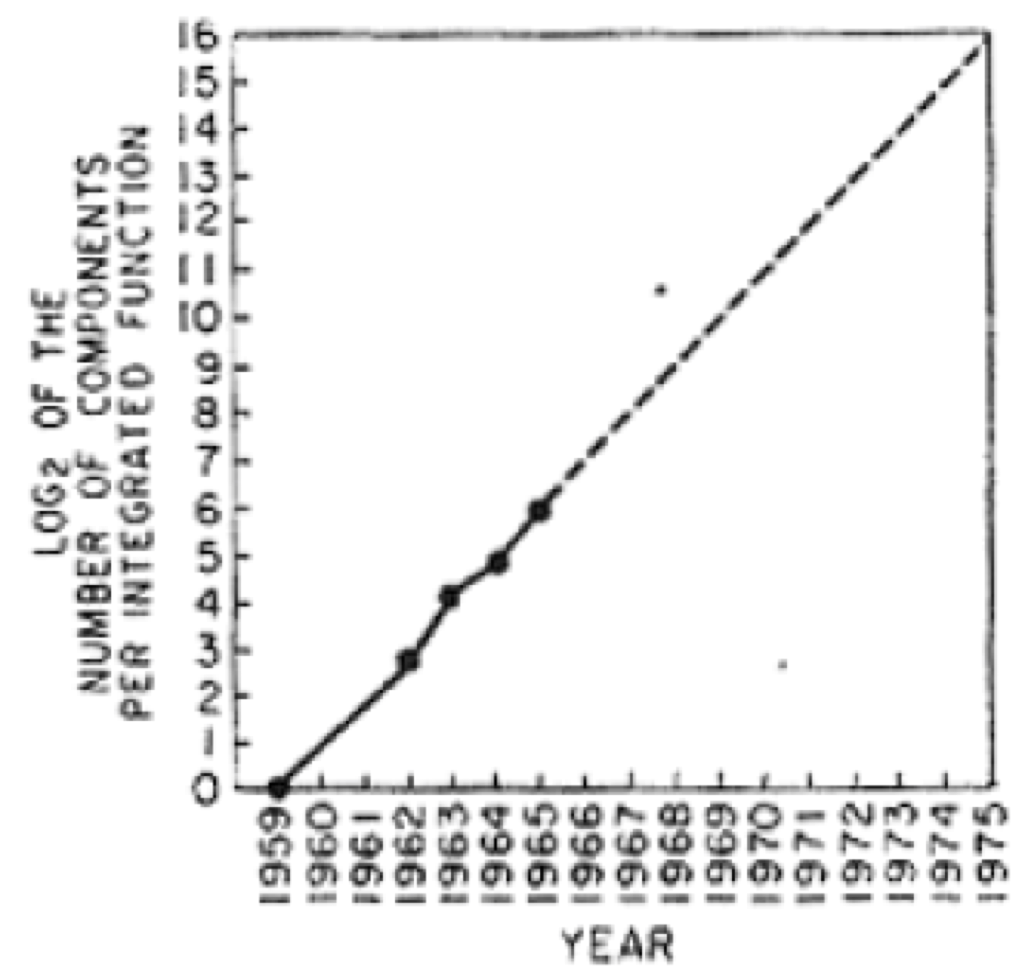

What Gordon Moore actually said was that the number of components that could fit on a microchip would double every year. I published a blog post in February about this and how it is finally coming to an end after a solid fifty year run. Moore had made his predictions in 1965 with only four data points using this graph:

He only extrapolated for 10 years, but instead it has lasted 50 years, although the time constant for doubling has gradually lengthened from one year to over two years, and now it is finally coming to an end.
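As a back-of-the-envelope check on how much a fifty year run compounds, here is a minimal Python sketch of repeated doubling. The function name `growth_factor` is hypothetical, and the sketch assumes a constant doubling period, which, as noted above, was not true in practice (the period lengthened from one year to over two).

```python
def growth_factor(years: float, doubling_period: float) -> float:
    """Multiplicative growth after `years` if a count doubles every `doubling_period` years."""
    return 2 ** (years / doubling_period)


# Moore's original 10-year extrapolation, doubling every year.
ten_year = growth_factor(10, 1)
# Fifty years at a fixed two-year doubling period, a simplified stand-in for the real, drifting period.
fifty_year = growth_factor(50, 2)

print(f"10 years, 1-year doubling: {ten_year:,.0f}x")
print(f"50 years, 2-year doubling: {fifty_year:,.0f}x")
```

Even the slower two-year period compounds to a factor of more than 33 million over fifty years, which is why a run of that length is so unusual.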

Doubling the components on a chip has led to computers that keep getting twice as fast. And it has led to memory chips that have four times as much memory every two years. It has also led to digital cameras which have had more and more resolution, and LCD screens with exponentially more pixels.

The reason Moore’s law worked is that it applied to a digital abstraction of true or false. Was there an electrical charge or voltage there or not? And the answer to that yes/no question is the same when the number of electrons is halved, and halved again, and halved again. The answer remains consistent through all those halvings until we get down to so few electrons that quantum effects start to dominate, and that is where we are now with our silicon based chip technology.

Moore’s law, and exponential laws like Moore’s law, can fail for three different reasons:

- (a) It gets down to a physical limit where the process of halving/doubling no longer works.

- (b) The market demand pull gets saturated so there is no longer an economic driver for the law to continue.

- (c) It may not have been an exponential process in the first place.

When people are suffering from exponentialism they may gloss over any of these three reasons and think that the exponentials that they use to justify an argument are going to continue apace.

Moore’s Law is now faltering under case (a), but it has been the presence of Moore’s Law for fifty years that has powered the relentless innovation of the technology industry and the rise of Silicon Valley, and Venture Capital, and the rise of the geeks to be amongst the richest people in the world, that has led too many people to think that everything in technology, including AI, is exponential.

It is well understood that many cases of exponential processes are really part of an “S-curve”, where at some point the hyper growth flattens out. Exponential growth of the number of users of a social platform such as Facebook or Twitter must turn into an S-curve eventually as there are only a finite number of humans alive to be new users, and so exponential growth cannot continue forever. This is an example of case (b) above.
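To make the S-curve point concrete, the Python sketch below compares pure exponential growth with logistic growth, a standard S-curve model, using the same starting value and growth rate. The parameters (a starting value of 1, a growth rate of 0.5 per period, and a ceiling of 1,000) are arbitrary illustrative assumptions, not data about any real platform.

```python
import math


def exponential(t: float, n0: float, r: float) -> float:
    """Unbounded exponential growth: n0 * e^(r t)."""
    return n0 * math.exp(r * t)


def logistic(t: float, n0: float, r: float, k: float) -> float:
    """Logistic (S-curve) growth with the same start n0 and rate r, saturating at capacity k."""
    return k / (1 + ((k - n0) / n0) * math.exp(-r * t))


N0, R, K = 1.0, 0.5, 1000.0  # hypothetical illustrative parameters

for t in range(0, 31, 5):
    e = exponential(t, N0, R)
    s = logistic(t, N0, R, K)
    print(f"t={t:2d}  exponential={e:12.1f}  logistic={s:7.1f}")
```

In the early periods the two curves are nearly indistinguishable; only later does the logistic curve flatten toward its ceiling while the exponential keeps climbing. A handful of early data points cannot tell you which of the two you are on.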

But there is more to this. Sometimes just the demand from individual users can look like an exponential pull for a while, but then it gets saturated.

Back in the first part of this century when I was running a very large laboratory at M.I.T. (CSAIL) and needed to help raise research money for over 90 different research groups, I tried to show sponsors how things were continuing to change very rapidly through the memory increase on iPods. Unlike Gordon Moore I had five data points! The data was how much storage one got for one’s music in an iPod for about $400. I noted the dates of new models and for five years in a row, somewhere in the June to September time frame a new model would appear. Here are the data:

| Year | GigaBytes |
|---|---|
| 2003 | 10 |
| 2004 | 20 |
| 2005 | 40 |
| 2006 | 80 |
| 2007 | 160 |

The data came out perfectly (Gregor Mendel would have been proud…) as an exponential. Then I would extrapolate a few years out and ask what we would do with all that memory in our pockets.

Extrapolating through to today we would expect a $400 iPod to have 160,000 GigaBytes of memory (or 160 TeraBytes). But the top of the line iPhone of today (which costs more than $400) only has 256 GigaBytes of memory, less than double the 2007 iPod, while the top of the line iPod (touch) has only 128 GigaBytes which ten years later is a decrease from the 2007 model.
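The extrapolation above is simple compound doubling. A minimal Python sketch, using only the five iPod data points from the table and assuming the last observed year-over-year ratio keeps holding, reproduces it. The helper names `yearly_ratios` and `extrapolate` are hypothetical.

```python
# (year, GigaBytes) for a roughly $400 iPod, from the table above.
IPOD_DATA = [(2003, 10), (2004, 20), (2005, 40), (2006, 80), (2007, 160)]


def yearly_ratios(data):
    """Year-over-year growth ratios between consecutive data points."""
    return [later[1] / earlier[1] for earlier, later in zip(data, data[1:])]


def extrapolate(data, target_year):
    """Project storage to target_year, assuming the last observed ratio holds every year."""
    last_year, last_gb = data[-1]
    ratio = yearly_ratios(data)[-1]
    return last_gb * ratio ** (target_year - last_year)


print(yearly_ratios(IPOD_DATA))      # [2.0, 2.0, 2.0, 2.0]: a perfect doubling
print(extrapolate(IPOD_DATA, 2017))  # 163840.0 GigaBytes, roughly 160 TeraBytes
```

Strict doubling gives 163,840 GigaBytes, which the text rounds to 160,000. Against the 256 GigaBytes of the top iPhone, the projection is off by a factor of 640.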

This particular exponential collapsed very suddenly once the amount of memory got to the point where it was big enough to hold any reasonable person’s complete music library, in their hand. Exponentials can stop when the customers stop demanding.

Moving on, we have seen a sudden increase in performance of AI systems due to the success of deep learning, a form of Machine Learning. Many people seem to think that means that we will continue to have increases in AI performance of equal multiplying effect on a regular basis. But the deep learning success was thirty years in the making, and no one was able to predict it, nor saw it coming. It was an isolated event.

That does not mean that there will not be more isolated events, where backwaters of AI research suddenly fuel a rapid step increase in performance of many AI applications. But there is no “law” that says how often they will happen. There is no physical process, like halving the mass of material as in Moore’s Law, fueling the process of AI innovation. This is an example of case (c) from above.

So when you see exponential arguments as justification for what will happen with AI remember that not all so called exponentials are really exponentials in the first place, and those that are can collapse suddenly when a physical limit is hit, or there is no more economic impact to continue them.

6. [C,D] Hollywood scenarios

The plot for many Hollywood science fiction movies is that the world is just as it is today, except for one new twist. Certainly that is true for movies about aliens arriving on Earth. Everything is going along as usual, but then one day the aliens unexpectedly show up.

That sort of single change to the world makes logical sense for aliens, but what about for a new technology? In real life lots of new technologies are always happening at the same time, more or less.

Sometimes there is an explanation, rational within Hollywood reality, for why there is a singular disruption of the fabric of humanity’s technological universe. The Terminator movies, for instance, had the super technology come from the future via time travel, so there was no need to have a build up to the super robot played by Arnold Schwarzenegger.

But in other movies it can seem a little silly.

In Bicentennial Man, Richard Martin, played by Sam Neill, sits down to breakfast being waited upon by a walking talking humanoid robot, played by Robin Williams. He picks up a newspaper to read over breakfast. A newspaper! Printed on paper. Not a tablet computer, not a podcast coming from an Amazon Echo-like device, not a direct neural connection to the Internet.

In Blade Runner, as Tim Harford recently pointed out, detective Rick Deckard, played by Harrison Ford, wants to contact the robot Rachael, played by Sean Young. In the plot Rachael is essentially indistinguishable from a human being. How does Deckard connect to her? With a pay phone. With coins that you feed into it. A technology that many of the readers of this blog may never have seen. (By the way, in that same post, Harford remarks: “Forecasting the future of technology has always been an entertaining but fruitless game.” A sensible insight.)

So there are two examples of Hollywood movies where the writers, directors, and producers, imagine a humanoid robot, able to see, hear, converse, and act in the world as a human–pretty much an AGI (Artificial General Intelligence). Never mind all the marvelous materials and mechanisms involved. But those creative people lack the imagination, or will, to consider how else the world may have changed as that amazing package of technology has been developed.

It turns out that many AI researchers and AI pundits, especially those pessimists who indulge in predictions C and D, are similarly imagination challenged.

Apart from the time scale for many C and D predictions being wrong, they ignore the fact that if we are able to eventually build such smart devices the world will have changed significantly from where we are. We will not suddenly be surprised by the existence of such super intelligences. They will evolve technologically over time, and our world will be different, populated by many other intelligences, and we will have lots of experience already.

For instance, in the case of D (evil super intelligences who want to get rid of us) long before we see such machines arising there will be the somewhat less intelligent and belligerent machines. Before that there will be the really grumpy machines. Before that the quite annoying machines. And before them the arrogant unpleasant machines.

We will change our world along the way, adjusting both the environment for new technologies and the new technologies themselves. I am not saying there may not be challenges. I am saying that they will not be as suddenly unexpected as many people think. Free-running imaginings about shock situations are not helpful; they will never be right, or even close.

“Hollywood scenarios” are a great rhetorical device for arguments, but they usually do not have any connection to future reality.

7. [B,C,D] Speed of deployment

As the world has turned to software the deployment frequency of new versions has become very high in some industries. New features for platforms like Facebook are deployed almost hourly. For many new features, as long as they have passed integration testing, there is very little economic downside if a problem shows up in the field and the version needs to be pulled back–often I find that features I use on such platforms suddenly fail to work for an hour or so (this morning it was the pull down menu for Facebook notifications that was failing) and I assume these are deployment fails. For revenue producing components, like advertisement placement, more care is taken and changes may happen only on the scale of weeks.

This is a tempo that Silicon Valley and Web software developers have gotten used to. It works because the marginal cost of newly deploying code is very very close to zero.

Hardware on the other hand has significant marginal cost to deploy. We know that from our own lives. Many of the cars we are buying today, which are not self driving, and mostly are not software enabled, will likely still be on the road in the year 2040. This puts an inherent limit on how soon all our cars will be self driving. If we build a new home today, we can expect that it might be around for over 100 years. The building I live in was built in 1904 and it is not nearly the oldest building in my neighborhood.
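To see why long-lived hardware limits the pace of change, here is a deliberately simple fleet-turnover model in Python. It assumes, hypothetically, a fleet of constant size in which a fixed fraction (one over an average vehicle lifetime) of the remaining old cars is scrapped and replaced each year, and, very optimistically, that every replacement from 2017 on is self driving. The 15-year average lifetime is an illustrative assumption, not a measured figure, and `legacy_fraction` is a hypothetical helper.

```python
def legacy_fraction(years: int, lifetime_years: float) -> float:
    """Fraction of the original fleet still on the road after `years`,
    assuming 1/lifetime_years of the remaining old vehicles are retired each year."""
    retire_rate = 1.0 / lifetime_years
    return (1.0 - retire_rate) ** years


ASSUMED_LIFETIME = 15  # hypothetical average vehicle lifetime, in years

for year in (2020, 2030, 2040):
    share = legacy_fraction(year - 2017, ASSUMED_LIFETIME)
    print(f"{year}: {share:.0%} of the 2017 fleet still driving")
```

Even under the unrealistic assumption that every new car sold from 2017 on is self driving, this toy model leaves about a fifth of the 2017 fleet on the road in 2040.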

Capital costs keep physical hardware around for a long time, even when there are high tech aspects to it, and even when it has an existential mission to play.

The US Air Force still flies the B-52H variant of the B-52 bomber. This version was introduced in 1961, making it 56 years old. The last one was built in 1963, a mere 54 years ago. Currently these planes are expected to keep flying until at least 2040, and perhaps longer–there is talk of extending their life out to 100 years. (cf. The Millennium Falcon!)

The US land-based Intercontinental Ballistic Missile (ICBM) force is all Minuteman-III variants, introduced in 1970. There are 450 of them. The launch system relies on eight inch floppy disk drives, and some of the digital communication for the launch procedure is carried out over analog wired phone lines.

I regularly see decades old equipment in factories around the world. I even see PCs running Windows 3.0 in factories–a software version released in 1990. The thinking is that “if it ain’t broke, don’t fix it”. Those PCs and their software have been running the same application doing the same task reliably for over two decades.

The principal control mechanisms in factories, including brand new ones in the US, Europe, Japan, Korea, and China, are based on Programmable Logic Controllers, or PLCs. These were introduced in 1968 to replace electromechanical relays. The “coil” is still the principal abstraction unit used today, and the way PLCs are programmed is as though they were a network of 24 volt electromechanical relays. Still. Some of the direct wires have been replaced by Ethernet cables. They emulate older networks (themselves a big step up) based on the RS-485 eight bit serial character protocol, which themselves carry information emulating 24 volt DC current switching. And the Ethernet cables are not part of an open network, but instead individual cables are run point to point, physically embodying the control flow in these brand new ancient automation controllers. When you want to change information flow, or control flow, in most factories around the world, it takes weeks of consultants figuring out what is there, designing new reconfigurations, and then teams of tradespeople rewiring and reconfiguring hardware. One of the major manufacturers of this equipment recently told me that they aim for three software upgrades every twenty years.
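For readers who have never programmed a PLC, the relay abstraction can be sketched in a few lines of Python. This is a hypothetical toy (the `ToyPLC` class and its signal names are invented for illustration), not any vendor's programming model: each scan evaluates a rung as a Boolean combination of contacts and energizes or de-energizes a coil. The rung shown is the classic start/stop “seal-in” circuit, in which a contact from the motor coil itself, wired in parallel with the start button, keeps the coil latched after the button is released.

```python
from dataclasses import dataclass, field


@dataclass
class ToyPLC:
    """A toy scan-cycle model of relay-style control logic (hypothetical, for illustration)."""
    inputs: dict = field(default_factory=lambda: {"start": False, "stop": False})
    coils: dict = field(default_factory=lambda: {"motor": False})

    def scan(self) -> None:
        """One scan: evaluate every rung from the current inputs and coil states."""
        # Rung 1: motor = (start OR motor) AND NOT stop.
        # The 'motor' contact in parallel with 'start' is the seal-in that latches the coil.
        self.coils["motor"] = (self.inputs["start"] or self.coils["motor"]) and not self.inputs["stop"]


plc = ToyPLC()

plc.inputs["start"] = True   # operator presses start
plc.scan()
plc.inputs["start"] = False  # operator releases start
plc.scan()
print(plc.coils["motor"])    # True: the seal-in contact holds the coil

plc.inputs["stop"] = True    # operator presses stop
plc.scan()
print(plc.coils["motor"])    # False: the stop contact breaks the rung
```

Everything here is expressed as contacts and coils, exactly the vocabulary of 24 volt relays; changing the behavior means rewriting rungs, and in a real factory often rewiring the physical I/O as well.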

In principle it could be done differently. In practice it is not. And I am not talking about just in technological backwaters. I just this minute looked on a jobs list and even today, this very day, Tesla is trying to hire full time PLC technicians at their Fremont factory. Electromagnetic relay emulation to automate the production of the most AI-software advanced automobile that exists.

A lot of AI researchers and pundits imagine that the world is already digital, and that simply introducing new AI systems will immediately trickle down to operational changes in the field, in the supply chain, on the factory floor, in the design of products.

Nothing could be further from the truth.

The impedance to reconfiguration in automation is shockingly mind-blowingly impervious to flexibility.

You cannot give away a good idea in this field. It is really slow to change. The example of the AI system making paper clips deciding to co-opt all sorts of resources to manufacture more and more paper clips at the cost of other human needs is indeed a nutty fantasy. There will be people in the loop worrying about physical wiring for decades to come.

Almost all innovations in Robotics and AI take far, far longer to get to be really widely deployed than people in the field and outside the field imagine. Self driving cars are an example. Suddenly everyone is aware of them and thinks they will soon be deployed. But it takes longer than imagined. It takes decades, not years. And if you think that is pessimistic you should be aware that it is already provably three decades from first on road demonstrations and we have no deployment. In 1987 Ernst Dickmanns and his team at the Bundeswehr University in Munich had their autonomous van drive at 90 kilometers per hour (56mph) for 20 kilometers (12 miles) on a public freeway. In July 1995 the first no hands on steering wheel, no feet on pedals, minivan from CMU’s team led by Chuck Thorpe and Takeo Kanade drove coast to coast across the United States on public roads. Google/Waymo has been working on self driving cars for eight years and there is still no path identified for large scale deployment. It could well be four or five or six decades from 1987 before we have real deployment of self driving cars.

New ideas in robotics and AI take a long long time to become real and deployed.

Epilog

When you see pundits warn about the forthcoming wonders or terrors of robotics and Artificial Intelligence I recommend carefully evaluating their arguments against these seven pitfalls. In my experience one can always find two or three or four of these problems with their arguments.

Predicting the future is really hard, especially ahead of time.

1 Pinpoint: How GPS is Changing Technology, Culture, and Our Minds, Greg Milner, W. W. Norton, 2016.

2 “V2s for Ionosphere Research?”, A. C. Clarke, Wireless World, p. 45, February, 1945.

3 “Extra-Terrestrial Relays: Can Rocket Stations Give World-wide Radio Coverage”, Arthur C. Clarke, Wireless World, pp. 305–308, October, 1945.

4 The Emotion Machine: Commonsense Thinking, Artificial Intelligence, and the Future of the Human Mind, Marvin Minsky, Simon and Schuster, 2006.

Bravo. Fabulous ideas clearly laid out for the logical reader. I fear that the people that need to understand these points won’t take the time to read them. I happened, by chance, to watch two movies yesterday, “The Imitation Game” and “Desk Set”. Such an odd coincidence that they are showing on Netflix at a time when AI is on the ascendant, or perhaps the network is tailoring content to the technology angst of our times.

> For instance, it appears to say that we will go from 1 million grounds and maintenance workers in the US to only 50,000 in 10 to 20 years, because robots will take over those jobs. How many robots are currently operational in those jobs? ZERO. How many realistic demonstrations have there been of robots working in this arena? ZERO.

I hesitate to nitpick but if you consider lawnmowing a grounds job, consumer lawnmowing robots have been available for a while.

http://www.husqvarna.com/us/products/robotic-lawn-mowers/

I had a home lawn mowing robot around 2000 or 2001. Different from a commercial system. E.g., at iRobot we had a commercial cleaner first, and the key was to get it to replace three different manually pushed machines for three different steps in cleaning a store: sweeping, wet cleaning, and polishing. Our fully automated machine used a 32 bit processor back in the 1990’s. The automatic version never got adopted but we did license the three-in-one machine to become a manually operated machine needing one third of the labor and they were commercially introduced. The Roomba for the home was an entirely different machine (8 bit processor at launch in 2002), and a very different cleaning regime (carpets were the principal target, not mopping and polishing tiled floors). I think the home lawnmowers are likewise very different in scope and capability than is needed for grounds keeping.

Thoroughly enjoyable, sensible essay. This series has been refreshingly logical and informative. Look forward to the book. Also enjoyed seeing you on stage at TechCrunch MIT event.

Thanks for this bullshit-free appraisal.

I have been working in AI for > 40 years: Strathclyde, Edinburgh, Glasgow. It’s been GOF-AI, good old fashioned AI: checkers in the 70s, distributed AI in the 80s & 90s, constraint satisfaction and phase transition phenomena, learning while searching, exact combinatorial search. Still walking the walk (most recent in IJCAI2017) and not pontificating. I have, I think, a conservative view of AI. In 2009 I had a leg amputated, above knee, so I have a tin leg. It’s high tech, with a computer in the knee and one in the ankle. I look like roboLecturer, most appropriate. This is state of the art. The leg detects when I walk down/up hill and adjusts. But what happens when I wheel my motor scooter out of the garage to get to work, walking backwards, downhill? Guess. And it weighs a ton. And it has to be charged. And sometimes it buzzes. And it ain’t 100% predictable (it “thinks” for itself and gets it wrong big time). Really, this is just one leg with a guy attached. You’d think it would be easy peasy, what with all that gee whiz AI. I can show you my bruises if you want.

“The good news is that us humans were able to successfully co-exist with, and even use for our own purposes, horses, themselves autonomous agents with on going existences, desires, and super-human physical strength, for thousands of years. And we had not a single theorem about horses. Still don’t!”

– A perfect example of the way your perspective is grounded in reality, and my favorite quote of the essay 🙂

I wrote down a few of the thoughts I had while reading this essay, in case you’d find them interesting:

Your point #1, over and under estimating, is actually deeply tied to exponential development – super slow at first, but eventually accelerating far beyond our predictions, just like your description of the GPS system. Your point #5 argues very effectively against the exponential argument with regard to AI specifically (and I completely agree with your reasoning there) – I just wanted to point out that point #1 seems to argue FOR it.

“Suppose a person tells us that a particular photo is of people playing Frisbee in the park, then we naturally assume that they can answer questions like “what is the shape of a Frisbee?”, “roughly how far can a person throw a Frisbee”, “can a person eat a Frisbee?”, “roughly how many people play Frisbee at once?”, “can a 3 month old person play Frisbee?”, “is today’s weather suitable for playing Frisbee?””

– From my experience with toddlers, I wouldn’t assume any of these things. They can learn to identify labels (such as “Frisbee”, “playing” and “park”) without knowing the labels for shapes, without understanding distances or the word “weather”, without understanding ages to know what to expect from “a 3 month old”, and without being able to count the people in the picture (or understand the concept of counting). They might have some understanding that a Frisbee is not food, though I expect the question “can a person eat a Frisbee?” to mostly get a funny look, like it’s an odd thing to ask…

Anyway, my point is that humans can actually be pretty proficient at labeling things that they don’t fully understand. They’re also good at using various words that they don’t have a full understanding of in contexts in which they heard the words used, making it appear as if they know what they’re talking about… This level of pattern-matching can be viewed as some sort of intelligence, and it definitely has its uses, but it doesn’t readily extrapolate to the deeper sort of intelligence that you probably have in mind.

And last, if I were to add another point arguing why their predictions are wrong, it would be that they think they can build an intelligence without understanding what intelligence is (with the hope that building such a system would help them understand how it works). They think that intelligence means winning at games, but real intelligence would involve the ability to choose what game to play, or to avoid playing altogether… It’s a whole other ballgame, if you will.

My comments about understanding the competence of humans were about what we reasonably assume about adults doing a task.

Of course, your criticism is about the extrapolation from a very specific skill to a much broader skill-set, without a fundamental understanding regarding what the broader skill-set requires. My point is that you can make such incorrect extrapolations in humans as well, and that there are things to be learned from the development of these cognitive skills.

The most surprising example I can offer you is that a toddler who correctly points out “this is purple” or “this is blue”, may still give you a blank stare when you point to something else and ask “what color is this?”, because they only learned to match the perceptual labels, but haven’t yet learned that these are called “colors”.

Anyway, just pointing out that our “reasonable assumptions” based on adult humans (from our culture) are often misguided, and running into counter-examples can be useful for our understanding (like how having “intuition” in the game of Go does not generalize to having true intuition in pretty much anything else).

Hi, my Dad, through the course of his work at the Patent Office, met Alan Turing in 1949 to look at aspects of the Manchester computer which Alan was working on at the time.

I remember my Dad putting a real damper on my hopes for the future when I was a young lad in the 1970’s. He told me that there were not enough electrons in the whole of the Universe to build a genuine thinking machine.

Whether he was right or not I may never know, but it made me question the future as depicted in SciFi films. We’re still a long way from even Robby the Robot, let alone Data.

Finally in terms of the B52 and other classic designs – the Jumbo jet for example – Arthur C Clarke made an interesting point in one of his novels.

Put simply, the keyboard and screen had long ago reached their optimum design and could not be improved upon, but somehow they looked old fashioned. Perhaps it was the friendly on-screen female help, who could have been dead for a hundred years or so.

I would like to think that I am a thinking machine. So there do seem to be enough electrons in the universe to build one. Whether humans are able to build one by technological means is a different question.

Awesome blog post – just now diving deep into this argument and about to produce a podcast on this very topic: “Should We Be Scared of AI?” – won’t put the link here unless you want it, but will definitely quote from this article and link to it!

Excellent piece cutting through the layers of BS painted across the basics as people, not machines, steer the debate for their own interests and ends.

Next steps are linking the ICT elements into some of the societal malaise we face – then we will not be looking at blanket statements but particular use of machine learning and AI to shift blame away from those in power. Already happening and the insidious slide toward automation, reduced feeling, of killing is possibly on its way. Just as many people think meat comes in little polystyrene packets, chopping up the AI dialogue into where powers are putting research funds will show where we need this debate to keep going along the lines set out here. Oppenheimer style?

Excellent article, and by and large, I agree. The groundskeepers have nothing to worry about, but the radiologists and dermatologists, and attorneys reading millions of pages of discovery documents, certainly do. You have pointed out elsewhere the barriers to self-driving cars in urban and suburban environments, but I would bet we will soon see self-driving trucks making long-haul trips between freight terminals located well outside major cities. There are a fair number of job categories that could lose 20-50% of their person-hours (few entire jobs, but inviting refactoring to employ many fewer people). We do need to think about the role of work (not just pay) in making our society thrive, and how we should cope with the threat that work could be in short supply.

Great article!

Circa 1990, I had asked Bruce Buchanan why Mycin was never deployed to hospitals and medical centers. His response was that no one wanted to assume the legal liability of it. I assume there will be a similar problem with driver-less cars, assuming the technical issues are resolved. What happens once several driver-less vehicles kill humans? If I buy a new self-driving car, will I have to buy automobile insurance? I think many non-technical questions have to be resolved before mass deployment of AI is ever achieved. I expect that will take a very long time to resolve when public safety or health is a concern.

Clarification – by “killing” I mean something like a driver-less car drives through a red light, hits another vehicle, and the occupants of either vehicle die, as opposed to intentionally killing a person.

Great work. Great outline. Good to have straightforward guys out there.

Please see:

https://hcjanko.wordpress.com/2017/06/09/welcome-to-the-ai-variete/

Great piece, but you are not completely up to speed on AGI, and you are in good company, as most people are not either. As a co-founder of OpenWorm, I took the connectome and emulated it in robotics, with success in that it displayed many behaviors we observe in the biological worm. I took that emulation and technology, have emulated other connectomes, and am just waiting on more fully mapped connectomes to become available to continue my research in connectomic AI = AGI. Furthermore, using the rules and principles given to us by nature, we have created artificial connectomes = AGI. Artificial Connectomes are being used in applications and robotics to basically create a new, virgin brain minus all the problems and issues we find using animal connectomes (e.g. to operate a robot or create a business continuity application, we obviously do not need the ability to regulate blood sugar and defecate, although many of these attributes could be used in ways, perhaps not obvious, that make something better).

We have found that the way we are wired, along with basic physiology of a single neuron, gives rise to our intelligence and in a general way. What we also recognize is that as this technology “grows”, it will be in obvious steps of intelligence, much like what we observe in nature; i.e., C. elegans is an obvious few steps away from human intelligence.

Why haven’t you heard more about us? We are self-funded and seeking funding, but investors are like lemmings: they don’t get anything new, they don’t understand the technology, and they are all chasing after the same narrow AI tech everyone tells them will save the world.

Thanks for the update!

Thanks for a refreshing dose of sanity on the topic.

Far too many are ready to believe the hype which circulates endlessly around the topic. Most humans’ conceptions about how their mind works have been demonstrated to be wrong, so it shouldn’t be much of a surprise to find them wrong about comp-based AGI. I consider your own work to be as groundbreaking as Einstein or Gödel.

Thank you for a good post. This also might be of interest:

https://humanizing.tech/the-deep-learning-rut-d7ba5c66aef1

and

https://www.oreilly.com/ideas/building-artificial-connectomes

Very sobering and thought-provoking counterpoint to the current hype and nihilism of mainstream media and their go-to commentators. I’ll be reading the archives and subscribing. What I’m hoping to find are your views as to whether quantitative instances of specific AI have any chance of a qualitative tipping point into AGI, if networked via a common set of protocols – which seems to me the most reasonable way for it to emerge. And whether we should actually be thinking about AGI as necessarily incredibly other to the human condition. The entire topic seems almost entirely anthropomorphised.

We can’t agree whether dolphins are intelligent and communicating, so I don’t think any AGI (which I don’t believe will “emerge”) is going to be easy to understand either.

Which in itself is another argument against AGI featuring in any futurist’s narrative? It’s all science fiction?

I recently re-read 1984. It was frightening, but not for the same reasons it was 25 years ago when I first read it. The job of the main character is to re-write history by editing newspapers. Laughable in today’s world of online news, yet we have fake news, Wikipedia edits, and information bubbles. Instead of manually clipping and editing newspapers, we have the ability to edit all information in near real time.

There is a highly efficient, always-on, mechanism for watching and tracking people, but it is easily fooled and there are huge gaps.

Another point about machine learning that I think most people miss is there are thousands of humans teaching these machines. Not engineers or PhDs, but thousands of ‘image analysts’ and ‘product development analysts’. Perhaps highly specialized and outsourced human intelligence (HI) with high speed global communications is the first level of AI = ACHI (Artificial Communication Human Int)?

Two AI companies powered by HI (human intelligence)

x.ai “Amy” – Intelligently schedules meetings when you cc the service in a reply to another person. Amy handles all the scheduling. But much of Amy is not software: Amy is a few thousand people reading all the responses that Amy does not understand. Sure, Amy will learn; the next time a reply includes the phrase ‘someplace with a vegan option’, the software will identify vegan as a way to filter restaurants. But even when x.ai relies on a human, the response is still very fast. This is the magic of x.ai.

Computer Vision and image recognition – I know of at least one venture-backed startup that has 2,000 employees in the Philippines working three full shifts to support 24×7 image recognition for an eBay-like app for selling your stuff. They are training the software; the company is doing well, and images are recognized in under 3 seconds. MAGIC!

Would either of these companies be classified as using AI? Are they first-generation AI? Is it possible to take the learning from this generation and apply it to the second, third, etc.?

“Modern day AGI research is not doing at all well on being either general or getting to an independent entity with an ongoing existence. It mostly seems stuck on the same issues in reasoning and common sense that AI has had problems with for at least fifty years.”

It would seem to me, as an outsider, that a few companies (Google, Graphiq, cyc.com) have at least (just) solved the problem of having a vast enough database of commonsense facts with some kind of related knowledge representation system.

What is interesting is that for some reason this has not (yet?) translated into visible progress (let alone an independent entity).

So maybe a huge collection of related facts does not translate into AI either (even if just a text- and internet-based AI that only processes and somehow understands words and articles).

Your last sentence is a pretty good bet, and one that would be agreed with by most people who have done any serious research in AI.

Thanks.

So we have to conclude that, for a computer to be able to partially understand and summarize an article like the one above, something important is still missing.

Maybe you can’t successfully prune the possibilities without a rich substrate of visual understanding of the world, like the ones our brains have. But I have no idea, really …

Thanks for the informative write up. You highlight how long each step of incremental progress in AI development has taken in the past but aren’t there considerably more people and resources being invested in AI research now? I thought an appropriate parallel to draw here would be the Space Race.

There is certainly more interest in AI right now, but most of the increased funding is going to companies that are largely trying to exploit the power that Deep Learning has unlocked. So this will certainly get more applications of AI systems working. But we know that what Deep Learning can do is only a sliver of what it takes to get to AGI. The “spillover” effect into academia might actually slow things down overall, as it will lead more people who might otherwise be exploring the next big thing to fall into the trap of following the current shiny object. I have seen this happen recently.

This would be more convincing if we had not just learned of Google’s canceling a project because their computers began to communicate with each other in ways that humans could not comprehend.

The risk vs. reward of underestimating AI progress is pretty high.

Your statement here is an example of why I need to write these blog posts. The incident that you refer to was actually at Facebook, not Google. And it was reported completely wrongly and sensationally and had nothing to do with reality. You have repeated the stupidity of the original “clickbaity” reporting. Read: https://www.cnbc.com/2017/08/01/facebook-ai-experiment-did-not-end-because-bots-invented-own-language.html

Thank you for this refreshing and sobering read. However, it bugs me that a similar line of argument could be used to dismiss the threat of global warming (after all, there was never a rapid transition of the global climate in all of human history). Arguments similar to yours were made against the impending transition from horse-drawn carriages to automobiles (which happened in less than two decades).

You are right to point out that the technologies that would replace people at tasks that require complex autonomous interaction with the environment don’t exist yet, and it is therefore impossible to give sound predictions on when they will be deployed. However, that does not mean that they won’t.

Regulatory inertia and a lack of incentives for innovation at big stakeholders cannot always guarantee that the coming changes will be gradual and non-disruptive. It turned out that most of symbolic AI did not generalize as well as hoped, and decades of incremental work on knowledge representation, computer vision and machine translation is being left by the wayside by learning approaches within the span of just five years. These improvements are probably going to be sufficient to eliminate a large fraction of human labor in retail, cashier and cleaning jobs, for instance. While the figures in the Market Watch story may turn out to be inaccurate, that does not mean that they can be summarily dismissed.

I think that you bear a huge responsibility. Political decision makers and stakeholders tend to be aligned with the status quo, with solutions and stances that result from experiences of the past. There is a strong incentive to tell them what they want to hear: that the risks and gravity of change are far overblown, and things are going to stay largely as they are. But ill-preparedness and denial present huge risks for our society as well, and while the future may be difficult to predict, it does not mean that it won’t happen.

Unfortunately, I think your argument amounts to saying that we can never refute arguments that claim big risks where there is no evidence. There has long been evidence for global warming, backed by data. There is no evidence at all that superintelligent machines are possible, let alone that we are close to producing them.

We can imagine all sorts of horrible futures with no evidence for them. At some point we have to distinguish science fiction from reality, or otherwise we cannot do anything but be frozen by fears. That is what my post was about: evaluating what we have evidence for and, at the same time, the mechanisms by which we overestimate things that may not be real at all.

The reality is that we will always have to live with uncertainty. The difficulty is managing that uncertainty and finding ways forward that make realistic estimates of the nature of the uncertainties.

Such is the price of having both conscious reason and choice.

Thank you for this! It’s really comforting to see some well articulated sanity amidst this bubble of phantasmagorical predictions and polemics around what is essentially the latest round of technological progress.

And I am honestly grateful. As a fairly rational person (programmer by profession), I only recently realized that I had been trapped in this religious/mythical form of thinking that the world is coming to an end, in some way, because of uncontrollable technological advances. It’s very hard to resist herd mentality in this particular case because regular tech news, which I feel obliged to follow, is becoming dominated by the various “sects” that you mention (exponentialists, singularitarians and what have you). And although I work in knowledge representation and am educated enough to understand what the algorithms actually do, the sheer amount of futuristic chanting is so overwhelming that I can’t remember my own name anymore 🙂

As others have said, I certainly applaud the clear-eyed reasoning on display when it comes to most of the gorier horrors and fanciful hype regarding AI and AGI. And it’s nice to see the context for both why splashy advances are hyped as hallmarks of something greater and more alarming, and why that’s wrong.

That said, I do think you unduly downplay the potential impact of AI on employment. While I agree it’s certainly unlikely to be sudden and catastrophic, I think it ought to be addressed in terms of public policy and generally in the way we think about work. After all, automation has already had a significant impact on the labor market and even the tiniest of incremental advances in AI can and will chip away at labor further. In this respect, I think both items you cite are problematic.

With regard to grounds maintenance, you know well that there are robotic lawn mowers on the market (and robotic weeders heading there), and I don’t know that I would disregard their impact on professional grounds maintenance quite so lightly. After all, a homeowner who pays for a Husqvarna robotic lawnmower is a homeowner not paying for a lawn service. Or a lawn service that buys a $1,000 robotic lawn mower is one that needs to bring one fewer person on jobs. The elimination of that single job may not seem significant, but when multiplied out, and combined with other jobs lost in similar fashion, it starts to add up.

With regard to PLCs, I think the focus is wrong there. PLCs are, themselves, the result of automation, and one of the reasons that they don’t advance further is that they don’t need to, something you supported in your dismantling of the idea of perpetual exponentialism. PLCs have already replaced so very many people, and robotics in manufacturing is continually replacing more. It doesn’t require us to make very large leaps to, for instance, replace material handlers. The biggest obstacle is manufacturers orienting their facilities to accommodate robotic picking and distribution of material, but they’ve already shown willingness to purpose-build or reorganize facilities for just such purposes.

I think in this case it’s more useful to consider AI not as some semi-isolated field constantly working on getting a boulder to the top of the hill, but as the digital extension of the automation that has been slowly eroding rote and manual human tasks for well over a century (or more, depending on how far you want to stretch “automation” as a concept). The labor-threatening movements with AI are incremental, and as such capable of sudden breakthroughs that could decimate certain labor populations (and already have, repeatedly).

One other note: I think it’s… a little fuzzy to suggest that “many” of today’s cars will still be on the road 23 years hence. Some certainly will, and I certainly don’t see automated vehicles displacing our entire driving public overnight, or even in ten years. I invite you to peruse used car listings as proof of this; the overwhelming majority of used vehicles being sold today are less than ten years old. Very few are older than that, and anything over 23 years old is probably being sold as a classic or collectible. I checked work truck listings as well, just to see how quickly, for instance, the driving labor market might be overturned by automated trucking, and fewer than 10% of used trucks were more than 20 years old. So I think 20 years is a reasonable horizon for a sea change in how humans drive (or don’t), and I can easily see the bulk of the change happening in the first ten years.

On home lawnmowers and commercial grounds maintenance: I think these are very different things with completely different requirements on the machines and the AI systems. At iRobot we worked on both commercial cleaning and home cleaning. Completely different.

With regard to PLCs, I was not saying that industry is wrong to be using them. I was saying that AI people who think the world is already digital, and that smart AI systems will be able to commandeer industrial automation systems and digitally make them operate differently, are wrong. PLCs are a layer of which they are not aware.

On cars still on the road: the vast majority of the cars being sold today are never going to be autonomous. The same is true of those sold in 2018, and most likely for many years to come. Conventional automakers are rushing to get electric vehicles out by 2025, and do not believe that they will have all-electric fleets until sometime in the 2030s. Electric vehicles are distinct from self-driving-enabled vehicles, but there are even less solid plans for when the first of those will be available, let alone entire fleets of them. So that most likely puts us into the 2030s there too, and even with a 10-year lifetime for cars we may be out to 2045, or 30 years hence, before driverless cars can safely assume that they will not encounter dangerous human drivers anywhere.