On January 1st, 2018, I made predictions about self driving cars, Artificial Intelligence, machine learning, and robotics, and about progress in the space industry. Those predictions had dates attached to them for 32 years up through January 1st, 2050.

I made my predictions because at the time I saw an immense amount of hype about these three topics, and the general press and public drawing conclusions about all sorts of things they feared (e.g., truck driving jobs about to disappear, all manual labor of humans about to disappear) or desired (e.g., safe roads about to come into existence, a safe haven for humans on Mars about to start developing) being imminent. My predictions, with dates attached to them, were meant to slow down those expectations, and inject some reality into what I saw as irrational exuberance.

I was accused of being a pessimist, but I viewed what I was saying as realism. Today, I am starting to think that I, too, reacted to all the hype, and was overly optimistic in some of my predictions. My current belief is that things will go, overall, even slower than I thought four years ago today. That is not to say that there has not been great progress in all three fields, but it has not been as overwhelmingly inevitable as the tech zeitgeist thought on January 1st, 2018.

As part of self certifying the seriousness of my predictions I promised to review them, as made on January 1st, 2018, every following January 1st for 32 years, the span of the predictions, to see how accurate they were. This is my fourth annual review and self appraisal, following those of 2019, 2020, and 2021. I am an eighth of the way there! Sometimes I throw in a new side prediction in these review notes.

The biggest news across these three fields this year is what appears to be a breakthrough into space tourism. For a few minutes at one point this year there were 19 people weightless in space at the same time and 8 of them were not professional astronauts. That happened on December 11th. There were six people aboard Blue Origin’s New Shepard in a sub-orbital flight, three Chinese astronauts on the Chinese Tiangong space station, seven regular crew members on the International Space Station (three from the US, two from Russia, and one each from Japan and the European Space Agency), and three visitors to that same station, one a professional Russian astronaut, accompanying a Japanese billionaire and his publicity person. As we will see below there were two other orbital flights and three other sub-orbital flights with tourists on board in 2021.

UPDATE of 2019’s Explanation of Annotations

As I said in 2018, I am not going to edit my original post, linked above, at all, even though I see there are a few typos still lurking in it. Instead I have copied the three tables of predictions below from 2021’s update post, and have simply added a total of twelve comments to the fourth columns of the three tables. As with last year I have highlighted dates in column two where the time they refer to has arrived.

I tag each comment in the fourth column with a Cyan (#00ffff) colored date tag in the form yyyymmdd such as 20190603 for June 3rd, 2019. In 2022 I have started highlighting the new text put in for the current year in LemonChiffon (#fffacd) so that it is easy to pick out this year’s updates.

The entries that I put in the second column of each table, titled “Date” in each case, back on January 1st of 2018, have the following forms:

NIML meaning “Not In My Lifetime”, i.e., not until beyond December 31st, 2049, the last day of the first half of the 21st century.

NET some date, meaning “No Earlier Than” that date.

BY some date, meaning “By” that date.

Sometimes I gave both a NET and a BY for a single prediction, establishing a window in which I believe it will happen.

For now I am coloring those statements when it can be determined already whether I was correct or not.

I have started using LawnGreen (#7cfc00) for those predictions which were entirely accurate. For instance a BY 2018 can be colored green if the predicted thing did happen in 2018, as can a NET 2019 if it did not happen in 2018 or earlier. There are five predictions now colored green, the same ones as last year, with no new ones in January 2020.

I will color dates Tomato (#ff6347) if I was too pessimistic about them. Last year I colored one date Tomato. If something happens that I said NIML, for instance, then it would go Tomato, or if in 2020 something already had happened that I said NET 2021, then that too would have gone Tomato.

If I was too optimistic about something, e.g., if I had said BY 2018, and it hadn’t yet happened, then I would color it DeepSkyBlue (#00bfff). None of these yet either. And eventually if there are NETs that went green, but years later have still not come to pass I may start coloring them LightSkyBlue (#87cefa). I did that below for one prediction in self driving cars this year.

In summary then: Green splashes mean I got things exactly right. Red means provably wrong and that I was too pessimistic. And blueness will mean that I was overly optimistic.
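The coloring rules above can be sketched in code. This is only an illustrative Python sketch, not how the tables are actually produced: the `Prediction` class, the `color` function, and the `NIML_ENDS` constant are hypothetical names. The LightSkyBlue case is left out because it is a judgment call rather than a mechanical rule, and a prediction with both a NET and a BY is assumed to stay uncolored until the thing happens or the BY date passes.

```python
from dataclasses import dataclass
from typing import Optional

NIML_ENDS = 2049  # NIML: not until beyond December 31st, 2049


@dataclass
class Prediction:
    net: Optional[int] = None  # "No Earlier Than" this year
    by: Optional[int] = None   # "By" the end of this year
    niml: bool = False         # "Not In My Lifetime"


def color(p: Prediction, review_year: int,
          happened_in: Optional[int] = None) -> Optional[str]:
    """Color for a review written on January 1st of review_year.

    happened_in is the year the predicted thing occurred, or None if it
    has not occurred yet. Returns None while the outcome is undecided.
    """
    # Treat NIML as "no earlier than the year after 2049".
    net = NIML_ENDS + 1 if p.niml else p.net

    if happened_in is not None:
        if net is not None and happened_in < net:
            return "Tomato"        # happened sooner than I said: too pessimistic
        if p.by is not None and happened_in > p.by:
            return "DeepSkyBlue"   # happened later than I said: too optimistic
        return "LawnGreen"         # entirely accurate

    # The thing has not happened yet.
    if p.by is not None:
        # A missed BY date is too optimistic; otherwise still undecided (assumption).
        return "DeepSkyBlue" if review_year > p.by else None
    if net is not None and review_year >= net:
        return "LawnGreen"         # it did not happen earlier than the NET date
    return None


print(color(Prediction(by=2018), 2019, happened_in=2018))
print(color(Prediction(net=2021), 2020, happened_in=2019))
```

The two calls mirror examples from the text: a BY 2018 that did happen in 2018, and a NET 2021 that had already happened by the 2020 review.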

So now, here are the updated tables.

Self Driving Cars

Spoiler alert: very little movement in deployment of actual, for real, self driving cars.

Way back four years ago when I made my predictions about “self driving cars” that term meant that the cars drove themselves, and that there was no one in the loop at a company office, or following in a chase car, or sitting in the driver or passenger seat ready to take over or punch a big red button. As I documented in last year’s update the AV companies conveniently neglect to mention these uncomfortable truths when they give press releases about their great progress. Even tech savvy people do not realize how very far all the breathless press stories about self driving cars being deployed are from being accurate about what is really going on. Last year I gave nine questions that every reporter should ask at any announcement by an AV car company. I have started seeing some similar questions being asked by more savvy reporters this last year. I have no idea whether any of them read my blog.

As an example of how companies gloss over things, if you carefully watch Waymo’s breathless “ooh” and “ah” filled video about their first deployment in San Francisco you will occasionally see the hands and knees of the safety driver sitting in the driver’s seat, conveniently unmentioned in the video that is trying to give the impression that their service is deployed. If you carefully read about the Chandler, Arizona, deployment and watch videos from there you will see how there is constant contact with people watching from home base, there are rescue vehicles that come and deposit a human driver to take over in some cases, and most importantly that the scale of the number of rides they give per week is tiny, way too few to be anything but a giant cash drain. That is two years after it was announced that the service was real and deployed. Nope. Still very early days.

And (h/t Mohamed Amer) here is Cruise(GM)’s very first driverless pickup less than two months ago, in San Francisco. Note that the passenger has two Cruise employees talking to him through the car. The passenger is also a Cruise employee, and notes that he was not allowed by Cruise to bring his three year old along for the ride. And there is a camera person at both the pick up and drop off location. No indication of how far the vehicle drove driverless before or after this ride, nor whether there is a chase vehicle (with a safety person on board ready to stop the driverless vehicle) or not, nor whether it could operate at any other pick up or drop off location (Steve Jobs’ demos of new Apple technology were famously scripted down to every key press as otherwise he would hit some bug or the other). This is an important step towards real deployment, but it is a long long way from real deployment.

The Hubris Of Self Driving Cars

In fact driverless cars, with safer roads, have been predicted again and again, for over 80 years, and it is always just around the corner. I recommend the book Autonorama by Peter Norton for some balance to the recent hype that really, really, autonomous cars are just around the corner once again. It will make many techno people uncomfortable and even angry. But it is good to have your internal beliefs challenged once in a while.

Norton points out that many companies have talked up the imminence of autonomous cars for a long time. Here is the list he documents for one such company, GM (which bought Cruise in 2016):

- 1939 World’s Fair at their Futurama exhibit, GM promised autonomous vehicles by 1960.

- 1964 World’s Fair in Futurama II, GM promised it again.

- 2010 GM and SAIC (Shanghai Automotive Industry Corporation) promised that it would arrive by 2030 as Xing! (autonomous Shanghai)

- 2017 GM 2017 Sustainability Report: Zero Crashes. Zero Emissions. Zero Congestion.

All worthy goals, but worthy goals don’t mean we yet know how to do it. We certainly didn’t in 1939 and 1964. And we certainly didn’t know how to do it when Waymo started (inside Google) back in January 2009 (thirteen years ago and counting…).

We have seen the long tail of difficult situations delay deployments for years. We know that humans can drive cars with “only” 35,000 annual fatalities just in the United States. We don’t know yet whether any of the sensor suites from any of the companies (all are different from the capabilities of human eyes) are sufficient to reach that goal or whether the presence of significant numbers of AVs on roads shared with humans will so impact the dynamics of driving that things get much worse. We can have highfalutin goals of zero this and zero that, but we actually have no idea whether the real world will allow that to happen without significant changes in approach. WE JUST DON’T KNOW YET.

What we do know is that human drivers have many different capabilities, perceptual, reasoning, and an apparent ability to model what is happening and make predictions. Pure learning approaches are unlikely to capture that capability, so systems based largely on learning are making a big unproven bet. That hasn’t stopped massive funding going into two new startups in the last year, one for cars (Wayve) and one for trucks (Waabi) that try to solve the whole problem from pretty much a pure learning perspective. Didn’t we see this movie before? (Again, thanks to Mohamed Amer for pointing me at these two.)

Industry Misses

I believe that the hubris surrounding self driving cars has led to delays in actual safety progress we could have already deployed if we had not been so distracted. I talk about this in the context of my own hubris after the table of the state of my predictions just below.

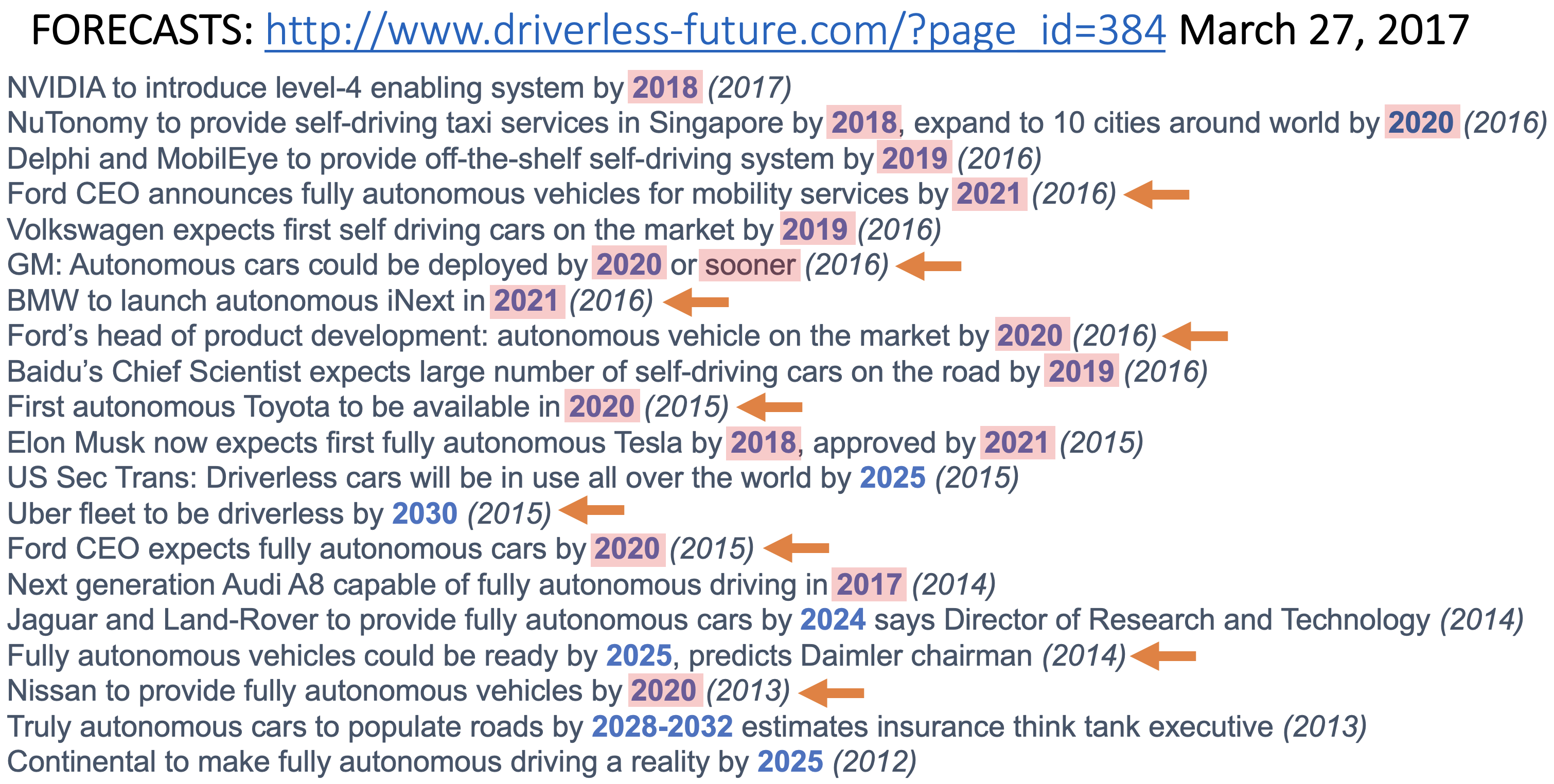

But first I will once again present a summary of predictions made by industry leaders that I scraped from a driverless future web site back in 2017. The site still exists but the last updates to it were made in late 2018. If you click on the predictions page you will see longer explanations of all these predictions that I gathered from it.

Each line is an individual prediction with the year it was made in parentheses. The orange arrows indicate that the companies subsequently pushed their dates later or cancelled their predictions completely, and that I personally noticed those updates. There may well be more that I have missed.

The years in blue indicate when the industry leaders thought these predictions would come to pass. I have highlighted all the dates up through 2021, now numbering 17 of the 23 predictions. Not one of them has happened or is even close to happening.

Hubris on a mass delusion scale. Audi fully autonomous by 2017? That is Teslan in its delusion level.

| Prediction [Self Driving Cars] | Date | 2018 Comments | Updates |
|---|---|---|---|
| A flying car can be purchased by any US resident if they have enough money. | NET 2036 | There is a real possibility that this will not happen at all by 2050. | |
| Flying cars reach 0.01% of US total cars. | NET 2042 | That would be about 26,000 flying cars given today's total. | |
| Flying cars reach 0.1% of US total cars. | NIML | | |
| First dedicated lane where only cars in truly driverless mode are allowed on a public freeway. | NET 2021 | This is a bit like current day HOV lanes. My bet is the left most lane on 101 between SF and Silicon Valley (currently largely the domain of speeding Teslas in any case). People will have to have their hands on the wheel until the car is in the dedicated lane. | 20210101 It didn't happen any earlier than 2021, so I was technically correct. But I really thought this was the path to getting autonomous cars on our freeways safely. No one seems to be working on this... 20220101 Perhaps I was projecting my solution to how to get self driving cars to happen sooner than the one for one replacement approach that the Autonomous Vehicle companies have been taking. The left lanes of 101 are being rebuilt at this moment, but only as a toll lane--no special assistance for AVs. I've turned the color on this one to "too optimistic" on my part. |
| Such a dedicated lane where the cars communicate and drive with reduced spacing at higher speed than people are allowed to drive | NET 2024 | | |
| First driverless "taxi" service in a major US city, with dedicated pick up and drop off points, and restrictions on weather and time of day. | NET 2021 | The pick up and drop off points will not be parking spots, but like bus stops they will be marked and restricted for that purpose only. | 20190101 Although a few such services have been announced every one of them operates with human safety drivers on board. And some operate on a fixed route and so do not count as a "taxi" service--they are shuttle buses. And those that are "taxi" services only let a very small number of carefully pre-approved people use them. We'll have more to argue about when any of these services do truly go driverless. That means no human driver in the vehicle, or even operating it remotely. 20200101 During 2019 Waymo started operating a 'taxi service' in Chandler, Arizona, with no human driver in the vehicles. While this is a big step forward see comments below for why this is not yet a driverless taxi service. 20210101 It wasn't true last year, despite the headlines, and it is still not true. No, not, no. 20220101 It still didn't happen in any meaningful way, even in Chandler. So I can call this prediction as correct, though I now think it will turn out to have been wildly optimistic on my part. |
| Such "taxi" services where the cars are also used with drivers at other times and with extended geography, in 10 major US cities | NET 2025 | A key predictor here is when the sensors get cheap enough that using the car with a driver and not using those sensors still makes economic sense. | |
| Such "taxi" service as above in 50 of the 100 biggest US cities. | NET 2028 | It will be a very slow start and roll out. The designated pick up and drop off points may be used by multiple vendors, with communication between them in order to schedule cars in and out. | |
| Dedicated driverless package delivery vehicles in very restricted geographies of a major US city. | NET 2023 | The geographies will have to be where the roads are wide enough for other drivers to get around stopped vehicles. | 20220101 There are no vehicles delivering packages anywhere. There are some food robots on campuses, but nothing close to delivering packages on city streets. I'm not seeing any signs that this will happen in 2022. |
| A (profitable) parking garage where certain brands of cars can be left and picked up at the entrance and they will go park themselves in a human free environment. | NET 2023 | The economic incentive is much higher parking density, and it will require communication between the cars and the garage infrastructure. | 20220101 There has not been any visible progress towards this that I can see, so I think my prediction is pretty safe. Again I was perhaps projecting my own thoughts on how to get to anything profitable in the AV space in a reasonable amount of time. |
| A driverless "taxi" service in a major US city with arbitrary pick and drop off locations, even in a restricted geographical area. | NET 2032 | This is what Uber, Lyft, and conventional taxi services can do today. | |
| Driverless taxi services operating on all streets in Cambridgeport, MA, and Greenwich Village, NY. | NET 2035 | Unless parking and human drivers are banned from those areas before then. | |
| A major city bans parking and cars with drivers from a non-trivial portion of a city so that driverless cars have free reign in that area. | NET 2027 BY 2031 | This will be the starting point for a turning of the tide towards driverless cars. | |
| The majority of US cities have the majority of their downtown under such rules. | NET 2045 | | |
| Electric cars hit 30% of US car sales. | NET 2027 | | |
| Electric car sales in the US make up essentially 100% of the sales. | NET 2038 | | |
| Individually owned cars can go underground onto a pallet and be whisked underground to another location in a city at more than 100mph. | NIML | There might be some small demonstration projects, but they will be just that, not real, viable mass market services. | |
| First time that a car equipped with some version of a solution for the trolley problem is involved in an accident where it is practically invoked. | NIML | Recall that a variation of this was a key plot aspect in the movie "I, Robot", where a robot had rescued the Will Smith character after a car accident at the expense of letting a young girl die. | |

Hubris

From my comments above the previous table it is clear that I think there has been too much hubris around driverless cars. But as I went through updating which predictions had stood the test of time I realized that I too had been guilty of hubris in my predictions.

There are two predictions about the earliest date that something might happen that I am now sure will not happen. And it is not because of technical difficulty but because no one is working on them.

The first is about having dedicated lanes on freeways with special infrastructure installed that cars can interact with to make autonomous driving easier. Back in 1997 there was a project called the National Automated Highway System Consortium to do just that. But when the hubris of fully self-driving cars made the need for external help for AVs seem redundant, such projects disappeared.

The second is the idea of leaving your car at the entrance to a garage and having it drive itself to park in a much tighter space than you could do.

Both these approaches to getting some sort of autonomy deployed in just a few years seem entirely doable to me, and I still believe that. But the lure of full self driving (as in full self driving, not merely as a marketing ploy) drove everyone away from these approaches.

My hubris was to think that others would see things the same way and would work on them. Given that no one was working on them do I really deserve credit for predicting they wouldn’t happen for a while?

Personal Gloating, An Irresistible Sin!!

Here I go. For the last two years I have had a little rant that in April of 2019 the CEO of Tesla had said there would be one million autonomous Tesla taxis on the road by the end of 2020. Kai Fu Lee and I had agreed at the time to eat all such taxis on December 31st, 2020. I.e., actually ingest them. All of them. Of course there were zero such autonomous Tesla taxis on the road at the end of 2020. And a year later the number is still zero.

Note that in the last two weeks Tesla has agreed to eliminate the ability of drivers of their vehicles to play video games on the car’s display screen while the car is in motion. This was under regulatory pressure, indicating that “Full Self Driving” software is still not fully self driving. The latest spin from Tesla is that of course the words in the name of the software, “Full Self Driving”, could not possibly be interpreted to mean full self driving.

Defending Against Slings And Arrows, Another Irresistible Sin!!

Earlier this year someone used my prediction that electric vehicles would account for 30% of automobile sales in the US no earlier than the year 2027 (see table above) as proof that I am a pessimist. Therefore, they said, my predictions about autonomous vehicles could not be trusted.

EV sales in the US were 1.7% of the total market in 2020 (up from 1.4% in 2019). We’ll need four doublings of that in seven years to get to 30%. It may happen. It may happen sooner than 2027. But not by much. It would be a tremendous sustained growth rate that we have not yet seen.
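As a rough check on that arithmetic, here is a minimal Python sketch. It uses only the market share figures quoted above; the function names `doublings_needed` and `required_annual_growth` are made up for illustration, and it assumes a constant compound growth rate in market share, which real sales will not follow exactly.

```python
import math


def doublings_needed(start_share: float, target_share: float) -> float:
    """Number of doublings to go from start_share to target_share."""
    return math.log2(target_share / start_share)


def required_annual_growth(start_share: float, target_share: float,
                           years: int) -> float:
    """Constant yearly growth rate (as a fraction) that reaches the target."""
    return (target_share / start_share) ** (1 / years) - 1


share_2019 = 1.4   # percent of US sales, from the text
share_2020 = 1.7   # percent of US sales, from the text
target = 30.0      # percent, the threshold in my prediction
years = 2027 - 2020

print(f"doublings needed: {doublings_needed(share_2020, target):.2f}")
print(f"required growth per year: "
      f"{required_annual_growth(share_2020, target, years):.0%}")
print(f"observed growth 2019 to 2020: {share_2020 / share_2019 - 1:.0%}")
```

Under these assumptions the share has to double a little more than four times, which works out to growing by roughly half again every year for seven years, compared with growth of roughly a fifth from 2019 to 2020.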

Robotics, AI, and Machine Learning

With respect to my predictions for AI and ML there are only three that come close to being in play this year, either in terms of work that was done that impacts my predictions, or the date is close to when I said that something would or would not happen. I have annotated the table below in those three places: the next big thing, change in perspective of how to measure AI success, and robots that can really get around in our houses in a general purpose way.

The Next Big Thing

Back in 2018 I predicted that “the next big thing”, to replace Deep Learning, as the go to hot topic in AI would arrive somewhere between 2023 and 2027. I was convinced of this as there has always been a next big thing in AI. Neural networks have been the next big thing three times already. But others have had their shot at that title too, including (in no particular order) Bayesian inference, reinforcement learning, the primal sketch, shape from shading, frames, constraint programming, heuristic search, etc.

We are starting to get close to my window for the next big thing. Are there any candidates? I must admit that so far they all seem to be derivatives of deep learning in one way or another. If that is all we get I will be terribly disappointed, and probably have to give myself a bad grade on this prediction.

So far the things that I see bubbling around and getting people excited are transformers, foundation models, and unsupervised learning.

Transformers provide a front end to deep learning that lets it handle sequential data rather than all input at once. This lets it be applied to natural language and to image sequences. We have seen tremendous hype about large language models using this mechanism. There is something going on there, but nothing as astounding or important as people imagine.

These language models are over interpreted by people as understanding what they are spitting out, especially when the press writes stories where they have cherry picked responses. But they come with incredible problems, including copyright violations, intellectual theft of code, and even outright life threatening danger when they find their way into consumer products. Tech companies have a real problem in rushing some of these systems to market.

Overall the will to believe in the innate superiority of a computer model is astounding to me, just as it was to others back in 1966 when Joseph Weizenbaum showed off his Eliza program which occupied just a few kilobytes of computer memory. Joe, too, was astounded at the reaction and spent the rest of his career sounding the alarm bells. We need more push back this time around.

Foundation models are large trained models that start out as a basis for tuning particular applications. There have been some self important announcements with a sort of me too feel (“Hey, I produced a foundation model too!!”), which don’t amount to much of an intellectual contribution. If this turns out to be the next big thing I am going to have to rip off my mask of equanimity and revert to my natural state of being a grumpy old man.

Unsupervised learning is an idea that has been around for a long time. Not a big intellectual jump to want to get it into deep learning–may be a hard technical problem, but not an intellectual breakthrough this time around. (RIP Teuvo Kohonen who passed away last month.)

So, am I wrong, or was I just too optimistic about how soon the next big thing will show up? I’ve given myself another 28 years of reviewing my predictions so perhaps there is time for something real and big to show up, and then I’ll be able to claim that I was simply too optimistic…

Beyond The Turing Test And Asimov’s Laws

I start to see the serious technical press really trying to come to terms with judging whether systems are intelligent or not, and the number of articles questioning the Turing Test seems to be on the rise. Perhaps this is driven by the perceptions of what transformer based natural language systems can do. They are not intelligent but they can fool an awful lot of the people an awful lot of the time.

As is often the case, Melanie Mitchell has a really good analysis, this time on the Turing Test and GPT-3. She is a renowned academic but she now also writes for Quanta Magazine.

Getting Around In A House

The first mass market robots that could get around reliably in ordinary people’s houses were introduced by my company iRobot at a two hundred dollar price point twenty years ago this year, in September of 2002. iRobot has now sold around 35 million Roombas and I am guessing that the follow on brands have together sold about the same number.

But we all know that Roombas could never be Rosie the Robot from the cartoon series The Jetsons. They are limited to a single flat floor and can’t get up high enough to really see objects that matter to humans in the houses that they bumble around in. One of my predictions is that a robot that can navigate around just about any US home, with its steps, its clutter, its narrow pathways between furniture, etc. won’t even be a lab demo until 2026, and not be deployed for real until 2030 and at low cost in 2035.

Amazon just made a good and necessary step towards this capability with the release of the Astro home robot. I got to spend time with some Astros before they were announced and I was impressed with the technical capabilities and at their price for all that technology. Whether they will be a successful product or not I can not hazard a guess.

The impressive step that Amazon has made is in getting a really reliable SLAM (Simultaneous Localization And Mapping) system that can quickly build a very good map of a house without any help from humans. It starts up in an unknown place in an unknown environment, and within minutes has a solid useable map. It is still somewhat low to the ground but its camera on a mast lets it get views up to table top level. No way to handle steps yet, and it mostly avoids clutter, but this is definitely progress.

| Prediction [AI and ML] | Date | 2018 Comments | Updates |

|---|---|---|---|

| Academic rumblings about the limits of Deep Learning | BY 2017 | Oh, this is already happening... the pace will pick up. | 20190101 There were plenty of papers published on limits of Deep Learning. I've provided links to some right below this table. 20200101 Go back to last year's update to see them. |

| The technical press starts reporting about limits of Deep Learning, and limits of reinforcement learning of game play. | BY 2018 | 20190101 Likewise some technical press stories are linked below. 20200101 Go back to last year's update to see them. |

|

| The popular press starts having stories that the era of Deep Learning is over. | BY 2020 | 20200101 We are seeing more and more opinion pieces by non-reporters saying this, but still not quite at the tipping point where reporters come at and say it. Axios and WIRED are getting close. 20210101 While hype remains the major topic of AI stories in the popular press, some outlets, such as The Economist (see after the table) have come to terms with DL having been oversold. So we are there. |

|

| VCs figure out that for an investment to pay off there needs to be something more than "X + Deep Learning". | NET 2021 | I am being a little cynical here, and of course there will be no way to know when things change exactly. | 20210101 This is the first place where I am admitting that I was too pessimistic. I wrote this prediction when I was frustrated with VCs and let that frustration get the better of me. That was stupid of me. Many VCs figured out the hype and are focusing on fundamentals. That is good for the field, and the world! |

| Emergence of the generally agreed upon "next big thing" in AI beyond deep learning. | NET 2023 BY 2027 | Whatever this turns out to be, it will be something that someone is already working on, and there are already published papers about it. There will be many claims on this title earlier than 2023, but none of them will pan out. | 20210101 So far I don't see any real candidates for this, but that is OK. It may take a while. What we are seeing is new understanding of capabilities missing from the current most popular parts of AI. They include "common sense" and "attention". Progress on these will probably come from new techniques, and perhaps one of those techniques will turn out to be the new "big thing" in AI. 20220101 There are two or three candidates bubbling up, but all coming out of the now tradition of deep learning. Still no completely new "next big thing". |

| The press, and researchers, generally mature beyond the so-called "Turing Test" and Asimov's three laws as valid measures of progress in AI and ML. | NET 2022 | I wish, I really wish. | 20220101 I think we are right on the cusp of this happening. The serious tech press has run stories in 2021 about the need to update, but both the Turing Test and Asimov's Laws still show up in the popular press. 2022 will be the switchover year. [Am I guilty of confirmation bias in my analysis of whether it is just about to happen?] |

| Dexterous robot hands generally available. | NET 2030 BY 2040 (I hope!) | Despite some impressive lab demonstrations we have not actually seen any improvement in widely deployed robotic hands or end effectors in the last 40 years. | |

| A robot that can navigate around just about any US home, with its steps, its clutter, its narrow pathways between furniture, etc. | Lab demo: NET 2026 Expensive product: NET 2030 Affordable product: NET 2035 | What is easy for humans is still very, very hard for robots. | 20220101 There was some impressive progress in this direction this year with the Amazon's release of Astro. A necessary step towards these much harder goals. See the main text. |

| A robot that can provide physical assistance to the elderly over multiple tasks (e.g., getting into and out of bed, washing, using the toilet, etc.) rather than just a point solution. | NET 2028 | There may be point solution robots before that. But soon the houses of the elderly will be cluttered with too many robots. | |

| A robot that can carry out the last 10 yards of delivery, getting from a vehicle into a house and putting the package inside the front door. | Lab demo: NET 2025 Deployed systems: NET 2028 | ||

| A conversational agent that both carries long term context, and does not easily fall into recognizable and repeated patterns. | Lab demo: NET 2023 Deployed systems: 2025 | Deployment platforms already exist (e.g., Google Home and Amazon Echo) so it will be a fast track from lab demo to wide spread deployment. | |

| An AI system with an ongoing existence (no day is the repeat of another day as it currently is for all AI systems) at the level of a mouse. | NET 2030 | I will need a whole new blog post to explain this... | |

| A robot that seems as intelligent, as attentive, and as faithful, as a dog. | NET 2048 | This is so much harder than most people imagine it to be--many think we are already there; I say we are not at all there. | |

| A robot that has any real idea about its own existence, or the existence of humans in the way that a six year old understands humans. | NIML | | |

The Problem As I See It

I have often stated that I think the field of AI, despite the great practical successes recently of Deep Learning, is probably a few hundred years away from where most people think it is. We’re still back in phlogiston land, not having yet figured out the elements, including oxygen.

AI, Robotics, and Machine Learning are areas that I have a real personal investment in. I wrote a terrible Masters thesis on ML back in 1977. I joined the Stanford AI Lab later that year, then the MIT AI Lab four years later, and became director of that lab in 1997, merging it with LCS (Lab for Computer Science) to form MIT CSAIL in 2003, the largest lab at MIT, still today. I have founded six AI and robotics companies. After 45 years in the academic and industry trenches can I be unbiased? Probably not.

I know that many who disagree with me will dismiss me for all that experience that I have. Perhaps those who agree with me should also dismiss me for the same reason!!

My current belief is that it all gets back to the symbol grounding problem, and even more deeply to adopting a computational approach to AI, Robotics, and ML (and I expect almost no one will agree with that latter claim).

Traditional AI put off the very hard problem of perception, and assumed that later that would be solved and a perception system would deliver symbols describing the world. What are symbols? Well, a convenient abstraction was Lisp symbols, and Lisp symbols really come down to whether two things have the same address in computer memory (with some technical fixes for copying garbage collectors moving stuff around everywhere…), with a little bit of dictionary like descriptions of each symbol in terms of other symbols. (Real dictionaries used by real people always bottom out in the physical experience of those people; not so a Lisp program.) Perhaps that certainty of symbols and separation from perception, and even the metaphor of computation, has led people astray in the way they try to solve the problems of common sense, qualitative reasoning, inference, etc. Many dear friends may take this as an attack on their work, but that is not how I mean it. I mean it as more general questioning.
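To make the point about symbols concrete, here is a minimal sketch, in Python rather than Lisp. The `Symbol` class, the `intern_symbol` helper, and the toy `definitions` table are all made up for illustration; they are not how any particular Lisp implements symbols. The sketch shows only the two properties described above: two symbols are "the same" exactly when they are the same object in memory, and each symbol's description consists of nothing but other symbols.

```python
class Symbol:
    """A bare symbol: a name and nothing more."""

    def __init__(self, name):
        self.name = name

    def __repr__(self):
        return self.name


_symbol_table = {}


def intern_symbol(name):
    """Return the single Symbol object for this name, creating it on first use."""
    if name not in _symbol_table:
        _symbol_table[name] = Symbol(name)
    return _symbol_table[name]


# Two mentions of "cup" are the same symbol only because they are the same
# object in memory; `is` compares identity, not meaning.
cup = intern_symbol("cup")
same_cup = intern_symbol("cup")
mug = intern_symbol("mug")

# Dictionary-like descriptions: every symbol is described by other symbols.
# (Hypothetical toy entries, chosen only for illustration.)
definitions = {
    cup: [intern_symbol("container"), intern_symbol("drink")],
    mug: [intern_symbol("cup"), intern_symbol("handle")],
    intern_symbol("container"): [intern_symbol("object"), intern_symbol("hold")],
    intern_symbol("drink"): [intern_symbol("liquid"), intern_symbol("swallow")],
}


def reachable(start):
    """Follow descriptions outward from start and collect everything reached."""
    seen, stack = set(), [start]
    while stack:
        symbol = stack.pop()
        if symbol not in seen:
            seen.add(symbol)
            stack.extend(definitions.get(symbol, []))
    return seen


print(cup is same_cup)  # same object, so the same symbol
print(cup is mug)       # different objects, so different symbols
# However far we chase the descriptions, all we ever find is more symbols.
print(all(isinstance(s, Symbol) for s in reachable(mug)))
```

Nothing in `reachable(mug)` ever touches a cup, a table, or a hand; the chain of descriptions never bottoms out in experience, which is the contrast with a real dictionary drawn above.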

Along came Deep Learning and many thought that the perception problem had been solved, and that DL gave us those symbols, recognizing objects in the world. But I don’t think that is right either. I think what DL has done is solve the labeling problem, not the object recognition problem. It gives good label, and it gives poor object. (And yes I meant label and object rather than labels and objects.)

If you want to expand your mind, try reading The Promise Of Artificial Intelligence by Brian Cantwell Smith. This is by far his shortest and most readable book from his forty years of producing them. It is exceptionally erudite. But it is still a hard slog, and even native English speakers will be consulting a dictionary quite often.

In this book Smith introduces the idea of registration, as a maintained relationship between an object outside of us and what goes on inside our head (and he would have it also in a classical computer) despite changes in perception and even context. It feels a bit like autopoiesis for thinking rather than Maturana and Varela’s conception of it for being. It is a hard concept to grasp and hold onto. It will be even harder to make it real. But perhaps that is what AI will need.

And yes this little section is obscure, but the best I can do for a rather short blog post.

Space

There are three big stories in the space industry from 2021, and they all impact one or more predictions that I made. The first is that space tourism notched up significantly from where it had been, both for sub-orbital and orbital trips. The second is that Boeing had another serious setback on launching people into space; I had said by 2022, now I think that is less likely to happen. And third, there was a lot of visible progress on the second stage of SpaceX’s Starship (what was once called the BF Rocket) in the first half of the year, and much less than people had expected on the first stage, with no launch yet.

Space Tourism Sub-Orbital

There were two players in each of sub-orbital and orbital space tourism. Virgin Galactic and Blue Origin for sub-orbital, and Roscosmos and SpaceX for orbital trips.

Virgin Galactic had two manned launches of their sub-orbital Unity each of which got to a bit less than 90km in altitude (note that way back in 2004 Virgin Galactic had three manned flights that got over 100km). The first of this year’s flights was with two professional pilots only, but then on July 11th two pilots were joined by four civilians, including billionaire founder Richard Branson. This flight was only announced after Jeff Bezos had stated his intention to get into space as the first sub-orbital tourist, and Branson rushed in and beat him by nine days. All six people on board were part of the Virgin Galactic organization, so none paid for their flights explicitly.

Blue Origin was a little like an actual tourism company in that all three of its manned sub-orbital space flights in 2021 had between one and four paying customers on board, though each launch also had non-paying celebratory guests. Jeff Bezos was on board the first of these flights which launched on July 20th, the 52nd anniversary of Armstrong and Aldrin landing on the Moon. A total of 14 people flew on these three flights, none of whom were professional space travelers. As best as I can tell six of those seats were paid for. Each of the Blue Origin flights topped 100km in altitude.

This is the first year that there have been paying customers on sub-orbital flights and it looks like six of them had paid seats, so not yet the few handfuls of paying customers that I predicted would not happen until at least 2022. So my prediction of no earlier than 2022 was correct. Blue Origin looks like it has a chance to get a few handfuls of paying customers in 2022, and we’ll see whether Virgin Galactic launches its first paying customer this coming year.

There is a question in my mind whether there will be enough appetite for these very short experiences of only about five minutes of weightlessness. The price per minute is much more than the rate for an orbital flight. Perhaps that will push the market more towards orbital tourism. In any case both the companies involved have much bigger space aspirations than just sub-orbital flights and may lose interest if either the demand or profit margins are not high enough. Sub-orbital tourism may never reach a breakneck pace as airplane pleasure flights did long ago–the market will decide.

Space Tourism Orbital

In 2021 we saw eight orbital space tourists, two of whom paid for their own rides; all eight rides were paid for, however, unlike the sub-orbital cases. The only previous space tourists were eight orbital seats, spread over seven individuals, between 2001 and 2009. In this one year, 2021, we had a doubling of the number of all time orbital space tourists.

The first orbital tourist flight of 2021 was carried out in September by SpaceX, when four people launched on the third flight of a Falcon 9 booster, aboard the second flight of a Dragon capsule. They were aloft just under 72 hours, or three days. One of the people onboard paid for the flight, and the other three were his guests. This is the only fully commercial orbital flight ever to date.

All previous orbital tourist flights had been on Soyuz vehicles going to the International Space Station (ISS), operated by Roscosmos, the same organization that sends all other Russian manned flights aloft. The other four of 2021’s tourists were on those same vehicles to the same destination, though three of the people were working for their flights. In October a Russian actor, along with a movie director/camera operator to film her, journeyed to shoot scenes for a movie. In December a Japanese billionaire, Yusaku Maezawa, along with his publicist, also went to the ISS. Both visits lasted 12 days. Maezawa is also signed up to loop around the Moon with SpaceX in 2023, delayed from the original announced goal of the fourth quarter of 2018.

Clearly 2021 was the best year ever for space tourism.

| Prediction [Space] | Date | 2018 Comments | Updates |

|---|---|---|---|

| Next launch of people (test pilots/engineers) on a sub-orbital flight by a private company. | BY 2018 | | 20190101 Virgin Galactic did this on December 13, 2018. 20200101 On February 22, 2019, Virgin Galactic had the second flight to space of their current vehicle, this time with three humans on board. As far as I can tell that is the only sub-orbital flight of humans in 2019. Blue Origin's New Shepard flew three times in 2019, but with no people aboard as in all its flights so far. 20210101 There were no manned suborbital flights in 2020. |

| A few handfuls of customers, paying for those flights. | NET 2020 | | 20210101 Things will have to speed up if this is going to happen even in 2021. I may have been too optimistic. 20220101 It looks like six people paid in 2021 so still not a few handfuls. Plausible that it happens in 2022. |

| A regular sub weekly cadence of such flights. | NET 2022 BY 2026 | | 20220101 Given that 2021 only saw four such flights, it is unlikely that this will be achieved in 2022. |

| Regular paying customer orbital flights. | NET 2027 | Russia offered paid flights to the ISS, but there were only 8 such flights (7 different tourists). They are now suspended indefinitely. | 20220101 We went from zero paid orbital flights since 2009 to three in the last four months of 2021, so definitely an uptick in activity. |

| Next launch of people into orbit on a US booster. | NET 2019 BY 2021 BY 2022 (2 different companies) | Current schedule says 2018. | 20190101 It didn't happen in 2018. Now both SpaceX and Boeing say they will do it in 2019. 20200101 Both Boeing and SpaceX had major failures with their systems during 2019, though no humans were aboard in either case. So this goal was not achieved in 2019. Both companies are optimistic of getting it done in 2020, as they were for 2019. I'm sure it will happen eventually for both companies. 20200530 SpaceX did it in 2020, so the first company got there within my window, but two years later than they predicted. There is a real risk that Boeing will not make it in 2021, but I think there is still a strong chance that they will by 2022. 20220101 Boeing had another big failure in 2021 and now 2022 is looking unlikely. |

| Two paying customers go on a loop around the Moon, launch on Falcon Heavy. | NET 2020 | The most recent prediction has been 4th quarter 2018. That is not going to happen. | 20190101 I'm calling this one now as SpaceX has revised their plans from a Falcon Heavy to their still developing BFR (or whatever it gets called), and predict 2023. I.e., it has slipped 5 years in the last year. 20220101 With Starship not yet having launched a first stage 2023 is starting to look unlikely, as one would expect the paying customer (Yusaku Maezawa, who just went to the ISS on a Soyuz last month) would want to see a successful re-entry from a Moon return before going himself. That is a lot of test program to get to there from here in under two years. |

| Land cargo on Mars for humans to use at a later date | NET 2026 | SpaceX has said by 2022. I think 2026 is optimistic but it might be pushed to happen as a statement that it can be done, rather than for a pressing practical reason. | |

| Humans on Mars make use of cargo previously landed there. | NET 2032 | Sorry, it is just going to take longer than every one expects. | |

| First "permanent" human colony on Mars. | NET 2036 | It will be magical for the human race if this happens by then. It will truly inspire us all. | |

| Point to point transport on Earth in an hour or so (using a BF rocket). | NIML | This will not happen without some major new breakthrough of which we currently have no inkling. | |

| Regular service of Hyperloop between two cities. | NIML | I can't help but be reminded of when Chuck Yeager described the Mercury program as "Spam in a can". | |

Boeing’s Woes

NASA funded SpaceX and Boeing to develop two reusable capsules for launching NASA astronauts. Originally they were neck and neck on schedule. Each had some setbacks and things have taken longer than expected.

The SpaceX vehicle, Crew Dragon, first took people to space in 2020, and has now done so five times, including hosting the first non-Russian orbital tourist flight.

Boeing has not been so lucky. In their first unmanned orbital test flight in December 2019 there were serious software problems and the vehicle did not reach the ISS as planned. During an August 2021 launch window for a re-fly of the unmanned test, problems with valves deep in the craft were discovered and it was removed from the launch pad. Current plans call for a launch in May 2022, but that is by no means certain. Meanwhile NASA has reassigned crew members from each of the first two manned launches as those astronauts were getting stale waiting for Boeing to fix the problems. This is not a good sign.

Starship

SpaceX Starship (as distinct from Boeing Starliner) was on a very rapid launch, blow-up, try-again pace as 2020 turned into 2021. Starship will be the largest rocket ever flown and both its first and second stage are intended to be fully re-usable.

After a series of fiery flights which resulted in loss of vehicle, usually in spectacular explosions, SpaceX launched the second stage of Starship in early May 2021 and had it land softly back at the launch pad, remain standing, and survive without blowing up.

Attention shifted to the first stage and people were expecting it to launch soon, but it was not to be in 2021. Three times, a second stage has been mated to a first stage, and sometimes a first stage has fired some engines in a static test. So far, however, there has been no launch attempt. It thus remains to be seen whether the lessons from the second stage can lead to a faster test campaign for the first stage.

As usual with the CEO of SpaceX it is sometimes hard to discern fact from fantasy/trolling. On November 30th (in the middle of the Thanksgiving break) he warned of potential bankruptcy for SpaceX unless all hands were on deck to fix problems with producing enough Raptor engines for Starship’s first stage. In the linked story “according to Musk’s email, SpaceX needs to launch Starship at least once every two weeks next year to keep the company afloat”. Next year in that context is 2022.

As a comparison, SpaceX launched its first Falcon 9 in June 2010. They did not reach an annual rate of one launch every two weeks until 2020 with 26 launches. That was a ten year ramp up. So to go from zero launches to a run rate of one every two weeks by the end of the year seems rather ambitious even by SpaceX record breaking standards. [[The Falcon 9 launch rate in 2021 improved to once every 12 days.]]
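The cadence arithmetic is easy to check. Here is a minimal sketch in Python using only the figures quoted above (26 launches in 2020, one launch every 12 days in 2021, and a target of one launch every two weeks). The function names are mine, and a 365-day year is assumed throughout.

```python
def days_between_launches(count, days_in_year=365):
    """Average gap in days between launches, given an annual launch count."""
    return days_in_year / count


def launches_per_year(gap_days, days_in_year=365):
    """Annual launch count implied by an average gap in days."""
    return days_in_year / gap_days


# 2020: 26 Falcon 9 launches works out to almost exactly one every two weeks.
falcon_2020_gap = days_between_launches(26)

# 2021: one launch every 12 days implies roughly 30 launches in the year.
falcon_2021_count = launches_per_year(12)

# The target quoted from the email: one Starship launch every two weeks.
starship_target_count = launches_per_year(14)

print(f"2020 Falcon 9 gap: {falcon_2020_gap:.1f} days")
print(f"2021 Falcon 9 launches implied: {falcon_2021_count:.1f}")
print(f"Starship launches implied by the target: {starship_target_count:.1f}")
```

In other words, the stated Starship target for its first year of launches is about the annual rate that Falcon 9 took ten years to reach.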

An Astounding Historical Rocket Ramp Up

Incidentally, though by modern standards SpaceX develops and deploys at incredible speed, there is one historical instance of even faster rocket development, production, and deployment.

Wernher von Braun designed the V-2 rocket (originally designated A-4), and carried out the first test flight on October 3rd, 1942. That sub-orbital flight reached an altitude of 192km, roughly twice what Virgin Galactic and Blue Origin’s suborbital rockets have achieved.

The V-2 is the progenitor of all human spaceflight rockets, and the team that designed and produced them ended up as principals in both the Soviet and US space programs. Wernher von Braun was the architect of the Apollo lunar landing program. However, back in 1942 he promoted the V-2 as a weapon, and by December of 1942 Hitler ordered them into mass production.

Over the next two years there were more than 300 further test flights, and then in the last few months of the war, starting on September 8th 1944 in an attack on newly liberated Paris, Germany launched over 3,000 of them on operational warhead delivery missions. Three thousand operational flights in eight months.
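To put a rough number on that rate, here is a back-of-the-envelope sketch in Python. It treats "over 3,000" as exactly 3,000 (so the result is a lower bound), takes a month as one twelfth of a 365-day year, and compares against the Falcon 9 figure of one launch every 12 days quoted earlier. The variable names are mine, and the comparison is only about cadence: a one-way weapon and a reusable orbital booster are very different things.

```python
operational_flights = 3000  # "over 3,000", so treat this as a lower bound
months = 8

flights_per_month = operational_flights / months
campaign_days = months * 365 / 12           # about 243 days
flights_per_day = operational_flights / campaign_days

# Falcon 9 in 2021: one launch every 12 days.
falcon9_flights_per_day = 1 / 12

ratio = flights_per_day / falcon9_flights_per_day

print(f"V-2 operational flights per month: {flights_per_month:.0f}")
print(f"V-2 operational flights per day: {flights_per_day:.1f}")
print(f"Ratio to the 2021 Falcon 9 launch rate: {ratio:.0f}x")
```

Under these assumptions the V-2 campaign averaged about a dozen operational flights a day, well over a hundred times the 2021 Falcon 9 cadence.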

Some thoughts on “The Problem As I See It”. Keep in mind I’m using some broad strokes here and speaking of the AI community and its trends as a bit of a monolith. There will of course be groups/papers at the margin that buck the trend.

I.

My guess is that the problem of grounding is closely linked and perhaps dependent on embodiment in the real world. Much of the “common sense” that we repeatedly hear is missing from large language models is not written down anywhere because it would be redundant. We, as embodied agents in the physical world, observe these examples all the time but never or seldom talk about them or write them down. They are, as the name reveals, common and uninteresting.

There is the further issue of modality: the domain of much of this common sense is multi-modal, combining our entire sensory input with the immediate detection and abstraction systems that follow and that produce whatever internal representation/symbols are used. This is a place far removed from the kingdom of words.

You’ve argued for this yourself in “Intelligence without Reason”, but there’s still a large part of the world’s AI resources dedicated to learning intelligence through words. There are however groups working on embodied intelligence, often employing and training RL systems. Unfortunately, whatever common-sense knowledge is deduced by these systems will be incomprehensible to us unless it is combined with whatever the large language models are learning. I’m hoping for more successful combinations of embodied and language-based systems.

II.

Much has been made of the inability of ANNs to reason, but I don’t know if it’s clear to everyone what that means. My prediction is that we will only be satisfied that AI systems are reasoning when they are able to explain the process to us. The outcome is otherwise indistinguishable. At a very fine scale, ANNs are reasoning already, but this reasoning is completely opaque to us.

What would communicated reasoning look like? Not just explanations, but requests for information and clarification and expression of doubt or certainty — all using symbols we understand, grounded in a shared universe.

I think this is a hard problem for the academic community to solve because the solution is a system and not an algorithm.

III.

For all of this to be convincing to us, I suspect the AI’s methodology and communication must all be causally aware. Although it is possible to deduce causality from observation, it is not easy and requires making assumptions that are causally aware (such as SEMs or causal diagrams). At some level, intervention is necessary which brings us full-circle to embodiment.

It is not clear to me that our current correlational approach to AI will achieve this. Even when intervention is possible, such as in the case of embodied reinforcement learning, I seldom see these interventions used to infer causal models. I suspect we will see more of this after it is expected of AI systems to produce credible explanations for their reasoning, not before.

I think two additional prediction categories that would be very interesting are:

* The Tesla FSD technology (or any strictly computer-vision driver) achieving a safe, provable driverless car in a non-trivial portion of a major U.S. city.

* When a humanoid robot assistant like Tesla Bot will be useful and on the market, at any price.

FSD uses such a different tech approach to autonomy than the other AV companies, and they market their tech with terms that imply autonomous capability, so I think they deserve their own category.

Based on social media content, I think possibly tens of thousands of Tesla fans think FSD will be ready by 2022-2023, and of course they have been saying it will be ready every year since about 2018. There are many hundreds of amateur “FSD Beta testers” on U.S. roads every day, with many other drivers feeling they are a hazard. Many Tesla fans think FSD is the great hope to improve driving safety and availability. Soon there could be thousands of these Beta testers on public roads. I hope Rodney Brooks agrees that what they are doing should be addressed by experts. Many of the same people think a useful Tesla Bot will soon follow their FSD robotaxi.

I know lots of people, including Lex Fridman, ask Rodney about these programs. On the Lex podcast, he gave an answer, but rather indirectly. It would be great to have a clear prediction of how he sees the potential of these programs, with a NET date on each.

Rod, on driverless cars, what do you think about Mercedes-Benz’s new Drive Pilot feature?

Briefly, it deals with bumper-to-bumper traffic on a freeway. This is an SAE “Level 3” feature, i.e., you can look away and do other things, but the self-driving works only within a specific set of conditions on specific roads, and the car will notify you when it’s time for you to take over.

As I wrote on my blog, I thought this is an important milestone for self-driving cars. It’s not an AI milestone, but an engineering milestone of practical significance. Not something that VCs would be interested in but real-world drivers would pay for.

Great reading again this year!

Here’s another category, somewhat similar to the restricted use cases for autonomous cars (parking, special highway lanes, etc.): autonomous tractors. John Deere says that they have their D8 tractor ready (https://www.engadget.com/john-deere-autonomous-tractor-203054726.html). This also seems similar to mapping a room for robot maneuvering, and a case where there’s a party interested enough (the farmer) to invest time and effort into making the map accurate, or willing to place special signs in strategic places in the field.

Here’s the latest paper I’ve found that points out weaknesses in deep learning:

https://arxiv.org/abs/2003.08907

The weakness the authors identify (and name) is “overinterpretation”, in short, the ability of vision models to correctly classify an image from a subset of its pixels that have no obvious relevance to the identified class. Such pixel subsets are often small and found at the borders of images. This is also a weakness in staple benchmark datasets, in particular ImageNet and Cifar-10. They give an example of the category “pizza” on ImageNet, where every image shows a pizza on a wooden table and where different models learn to identify a pizza only from the pixels of the wooden table.

I wasn’t 100% convinced by the authors’ experiments: they test a bunch of vision models by replacing up to 95% of the pixels in images and then training models on those, let’s say, reduced images. They select the 5 to 10% of pixels to keep from each image using a gradient descent-based variant of an approach originally proposed to explain the decisions of vision models (SIS for Sufficient Input Subsets). So they basically select parts of images that they know vision models focus on when performing a classification. They also test their trained models on reduced images and find that the trained models can classify the altered images with high confidence, but I’m left wondering how well the same models would do on the full, un-reduced images, which is nowhere to be found in the paper and its accompanying materials. They do find that training on a random selection of 5-10% of the original images causes performance to degrade, but while that removes an obvious objection about circular reasoning, the paper is still missing a smoking gun.
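For readers who want to picture what a "reduced" image is, here is a minimal sketch in plain Python. It implements only the random-selection baseline as I read it from the description above, not the SIS-based selection the paper actually uses. The function name, the toy 32x32 image, and the choice of 0 as the mask value are my own assumptions.

```python
import random


def keep_random_pixels(image, keep_fraction, seed=0):
    """Mask all but a random subset of pixels to 0.

    image is a list of rows of pixel values. Returns the masked copy and the
    set of (row, col) positions that were kept.
    """
    height, width = len(image), len(image[0])
    positions = [(r, c) for r in range(height) for c in range(width)]
    n_keep = round(keep_fraction * len(positions))
    kept = set(random.Random(seed).sample(positions, n_keep))
    masked = [
        [image[r][c] if (r, c) in kept else 0 for c in range(width)]
        for r in range(height)
    ]
    return masked, kept


# A made-up 32x32 single-channel "image" with values 1..255, so that any
# zero in the output is guaranteed to be a masked pixel.
rng = random.Random(1)
image = [[rng.randint(1, 255) for _ in range(32)] for _ in range(32)]

masked, kept = keep_random_pixels(image, keep_fraction=0.05)
print(len(kept), "of", 32 * 32, "pixels kept")
```

Keeping 5% of a 32x32 image leaves 51 pixels, which gives a sense of how little signal a classifier is working from when it still labels such an image with high confidence.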

Still, there’s evidence of something obviously wrong on all levels going on. Important benchmark datasets are broken and the models learned from them are also broken. Deep learning appears broken, both as a set of approaches to machine learning, prone to over-specialisations and incapable of useful generalisation, and as a field of research that relies on uninformative benchmarks by now “done to death” many times over.

I appreciate your prediction about the Next Big Thing™ in machine learning. Geoff Hinton has said that the Next Big Thing will come from a graduate student who is right now busy doubting everything that Geoff Hinton has ever said. I’m sorry that this will not be me- I haven’t heard _everything_ that Geoff Hinton has ever said.

Jokes aside, I’m concerned that the current generation of machine learning researchers will keep flogging a dead horse, for ever reaching for Andrew Ng’s “low-hanging fruit”, leaving their students, or their students’ students, to pick up the pieces. I fear, that is, that there will not be a “Next Big Thing” in machine learning for many more years, considerably longer than your prediction. Research is slowly sinking into a tar pit of meaningless incremental work and it will take extreme effort to shake it free.

Perhaps I should say that I find many of your predictions extremely optimistic 🙂

“The Problem As I See It”

I think this book presents a better way of understanding the nature of intelligence: Mind in Life: Biology, Phenomenology, and the Sciences of Mind, by Evan Thompson. It has much in common with your views and the views of Smith, Varela, and Maturana (the latter two are often cited by Thompson). It is quite a challenging read, but worth the effort.

Thoroughly enjoyed reading your views. I will say there is at least one company – Cavnue (name derived from connected & automated vehicle avenues) – working to simplify and enhance the operational domain for automated vehicles. Dedicated lanes are part of this vision, but there is much more that infrastructure can do for these on-board technologies, “physically” and “digitally”. (Full disclosure: I was part of the founding of that company.)

Delivery vehicles:

In Russia we have State postal delivery in collaboration with Yandex that functions on city streets. https://www.pochta.ru/robodelivery

I think it’s nothing but a gimmick as we do not need any automation here at all given our cost of labor (median salary is about 300 bucks) and these robots can’t use elevators or climb stairs, and they have live operators for difficult cases, but I wanted to point out that you focus only on US, while China might be ahead in the AI craze.

Very nice. Good that you are doing your bit to maintain some perspective in the AI field.

I agree with the assessment of the key problem in AI as being, broadly, symbol grounding.

Contrasting Deep Learning as solving “the labeling problem, not the object recognition problem” is a good way to put it.

I interpret the contrast to be novelty. Labels are not novel. The unsolved problem is the recognition, and creation, of novel “objects”.